Argument in Academic Writing: A Systematic Scoping Review

Abstract

Background: Argumentative writing represents a core dimension of academic literacy within higher education; however, research concerning “argument,” “argumentation,” and “argumentative writing” remains dispersed across distinct disciplinary paradigms and commonly draws upon non-equivalent conceptual definitions and analytical methodologies. This fragmentation has practical consequences for teaching and assessment, particularly as technology-enhanced writing environments and AI-mediated support expand the range of tools used to scaffold and evaluate argumentation.

Purpose: To map the conceptual and methodological approaches to studying argument in academic writing in higher education, including definitions of argumentative writing, the argument models employed, the ways argument quality is operationalized, the dominant research directions, and the role of digital and AI-mediated environments in the teaching and assessment of argumentation.

Materials and Methods: The review followed PRISMA-ScR guidance and used a PCC framework to define source eligibility. A structured search was conducted in Scopus on 27 September 2025 using predefined Boolean queries covering argumentative writing, academic writing, and higher education; backward reference searching was also applied to identify additional relevant studies. Records were screened against inclusion and exclusion criteria, and data were charted in a standardised extraction form. The synthesis combined (a) bibliometric keyword co-occurrence mapping using VOSviewer to identify major thematic blocks and (b) expert thematic coding to interpret conceptualisations, models, and methodological patterns across the literature under analysis. Inter-coder agreement was established during iterative coding.

Results: Ninety-five sources were included in this review. Publication output increased after 2018, with the largest share of studies appearing around 2019–2020, and the evidence base was geographically concentrated in Asia and the Americas. Bibliometric mapping and expert synthesis converged on several recurring blocks: theoretical and definitional work on argument/argumentation in academic writing; pedagogical studies on teaching argumentative writing and related scaffolding; assessment-oriented research (rubrics, indicators of quality, and technology-supported evaluation); sociolinguistic and interlinguistic perspectives, especially in EFL/L2 contexts; and an emergent strand focused on digital writing environments, automated feedback, and AI-enabled support. Across the corpus, Classical, Toulmin-based, and Rogerian traditions function as influential modelling frameworks, but they are applied inconsistently. Operationalisations of argument quality also vary substantially, most commonly privileging detectable structural elements over comparably stable measures of reasoning strength or epistemic integration.

Conclusion: The review shows that the development of research on argumentative writing in higher education is constrained not by a lack of studies, but by the absence of conceptual and methodological coherence. Differences in the definitions of argument, the models used for its analysis, and the approaches to assessing its quality limit the comparability of findings and complicate the translation of research insights into teaching and assessment practice. Under these conditions, the integration of argumentation theories with more robust and substantively oriented approaches to argument assessment becomes particularly important, especially against the backdrop of the growing automation of the structural aspects of writing in digital and AI-mediated environments.

Keywords: Argumentative writing, Academic writing, Higher education, Argumentation, Argument quality, Toulmin model, Rogerian argument, Assessment, Writing pedagogy, EFL writing, Digital writing environments, Artificial intelligence

Introduction

Argumentative writing has become a core component of academic literacy in higher education because it is one of the main textual forms through which students participate in disciplinary discussion and demonstrate reasoned judgement. In institutional settings, it is increasingly treated as a learning outcome precisely because it makes visible how writers justify positions, evaluate competing claims, and work with evidence in a transparent way (Goldman et al., 2016; Ozfidan and Mitchell, 2020; Pei et al., 2017; Sharadgah et al., 2019; Zhang and Zhang, 2021; Kaewneam, 2025). In academic writing, argumentation is realised through adopting a stance on a contestable issue and supporting it with claims, relevant evidence, and explicit reasoning (Rapanta and Macagno, 2019; Yasuda, 2023). At the same time, argumentative writing is not reducible to persuasion, since it also performs an epistemic function when writers use visual (Tikhonova and Mezentseva, 2025) and linguistic sources to qualify, support, and contest claims, thereby shaping stance and contributing to knowledge building (Ferretti and Fan, 2016; van Weijen et al., 2019; Yasuda, 2023).

Despite this shared educational importance, research on argumentative writing operates with noticeably different understandings of what counts as an argument and how argument quality should be described. Studies often address different aspects of the same phenomenon and then treat their results as comparable, even when the underlying construct focus is not aligned. This divergence is visible in how quality is operationalised. Structural features of claims and support, dialogic work with counterpositions, and rhetorical organisation across genres are invoked with different emphases and are sometimes treated as interchangeable indicators of competence (Stapleton and Wu, 2015; Yang, 2021; Lu and Swatevacharkul, 2021; Su et al., 2023). As a result, the field has developed a productive vocabulary, but commensurability across studies remains limited, and identical labels can conceal distinct analytical commitments (Ferretti and Fan, 2016; Allen et al., 2019).

Over the past decade, research on argumentative writing has expanded across institutional settings, including higher education, teacher education, multilingual classrooms, and academic literacy programmes, and it has increasingly moved into digitally mediated environments that shape both writing processes and the forms of instructional support available to writers (Newell et al., 2015; Cheong et al., 2021; De Castro Daza et al., 2022). At the same time, a growing body of work has turned to the interactional and evidential labour that academic writers are expected to perform, including anticipating readers’ objections, engaging alternative viewpoints, and producing rebuttals grounded in credible sources (Kibler and Hardigree, 2017; Sánchez-Peña and Chapetón, 2018; Hutasuhut et al., 2023). These shifts have made differences in constructs and methods more visible and, in practical terms, have raised the stakes of how the field defines what counts as a successful argument in academic writing.

As the volume of work on argumentative writing has grown, conceptual fragmentation has become increasingly apparent. Definitions of argument, argumentation, and argumentative writing diverge across applied domains. Some approaches prioritise reasoning processes and their development (Mulyati and Hadianto, 2023; Hu and Saleem, 2023), others foreground evidence use and rhetorical effectiveness (Shi et al., 2022; Uzun, 2024), and a further strand conceptualises argumentation in dialogic and sociocultural terms (Najjemba and Cronjé, 2020; De Castro Daza et al., 2022). This divergence is also reflected in the use of analytic frameworks. Classical, Toulmin-based, and Rogerian models are frequently cited, yet they are applied in non-equivalent ways, and the boundaries between their functions are not always made explicit (Stapleton and Wu, 2015; Zainuddin and Shammem, 2016). The problem is compounded by locally adapted coding schemes and context-specific instruments, which may be well aligned with local educational aims but reduce comparability across studies (Lesterhuis et al., 2018; Paris et al., 2025). In this context, existing reviews have tended to remain restricted in scope and do not fully integrate definitional, methodological, and technology-mediated developments into a single map of the field (Amini Farsani et al., 2025).

Against this background, the task is not simply to compile findings but to reconstruct how the field is organised. This requires clarifying how central concepts are defined, how argument structure and function are modelled, and which recurring patterns shape research trajectories across traditions. The need for such reconstruction has become more acute as automated writing evaluation, argument mining, and AI-assisted writing have expanded and begun to influence both pedagogy and assessment (Wambsganss et al., 2020; Lawrence and Reed, 2020; Guo et al., 2022; Yang et al., 2023; Khampusaen, 2025). Digital environments enable scalable feedback and analytics, which sharpens a fundamental question discussed in the literature, namely which aspects of argumentation are being supported and which are being measured when support is mediated by tools (Benetos and Bétrancourt, 2020; De Castro Daza et al., 2022). In parallel, cognitive, sociocultural, and multilingual strands continue to propose new typologies of reasoning and new approaches to evaluating argument quality, widening the conceptual space that must be mapped rather than assumed to follow a single developmental pathway (Mateos et al., 2018; Kleemola et al., 2022; Wang and Newell, 2025).

An integrative reconstruction is consequential for three groups of readers. For researchers, it clarifies which constructs and measures can be treated as comparable and where disagreements are better explained by non-equivalent operationalisations than by substantive theoretical conflict. For educators and programme designers, it makes visible what different models privilege in instruction and how those priorities translate into tasks and rubrics, whether the emphasis falls on textual organisation, warranting and evidence-based reasoning, or dialogic engagement with alternative positions (Rapanta and Macagno, 2019; Stapleton and Wu, 2015; Lu and Swatevacharkul, 2021). For scholars working in technology-enhanced writing, the map is necessary for aligning tool affordances with explicit theoretical commitments rather than treating digital support as conceptually neutral (Wambsganss et al., 2020; Lawrence and Reed, 2020; Guo et al., 2022).

In response, the present study conducts a systematic scoping review of research on argument in academic writing in higher education. The review treats argumentative writing as a genre-specific realisation of argumentation that brings together instructional, cognitive, and rhetorical dimensions, providing a coherent basis for mapping conceptual and methodological diversity across the field (Ferretti and Fan, 2016; Allen et al., 2019; Yasuda, 2023). Conceptually, the reconstruction is organised around four analytic axes, namely definitional choices, model selection, operationalisations of argument quality, and the mediation of instruction and assessment through digital and AI-enabled environments. The analytic stance is integrative and diagnostic. Rather than adopting a single model as a default, the review reads the literature as a set of partially overlapping traditions and examines where they converge, where they diverge, and which tensions persist, particularly in definitions, the use of argument models, and the operationalisation of argument quality (Najjemba and Cronjé, 2020; Shi et al., 2022; Lesterhuis et al., 2018; Paris et al., 2025). This position follows from the interdisciplinary character of the field and recognises that different traditions may capture different aspects of argumentative writing (Musa, 2019; Hutasuhut et al., 2023; De Castro Daza et al., 2022).

To maintain analytical tractability, the review applies explicit eligibility criteria and is restricted to English-language publications from 2015 to 2025 that address higher-education academic writing contexts. These boundaries function as a principled delimitation for reconstructing how argument models, typologies, and quality dimensions are developed and applied within a defined segment of the literature. On this basis, the review addresses the following research questions:

RQ1. How is “argumentative writing” conceptualised in higher-education academic writing research, and which definitional components are treated as constitutive of the phenomenon?

RQ2. Which argument models and analytic frameworks are used to represent argument structure and relations in student academic writing, and how are these frameworks operationalised in empirical studies?

RQ3. How is argument quality operationalised across the field, and which dimensions and indicators are most commonly used in assessment and analysis?

RQ4. Which thematic and methodological patterns organise the mapped literature, including dominant research foci, study designs, and educational contexts?

RQ5. How do digital and AI-mediated environments shape the teaching, scaffolding, and assessment of argumentative writing, and how are technology-enhanced interventions aligned with the underlying argument constructs?

Method[1]

Transparency Statement and Protocol

We conducted a scoping review to map the existing literature on argumentative writing in higher education and to address the research questions. The review aimed to delineate the scope of the research landscape, identify emerging evidence, and highlight knowledge gaps, thereby contributing to ongoing scholarly discourse and educational policy development. This review adhered strictly to the PRISMA-ScR guidelines (the Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews). Prior to commencing the study, a detailed protocol was developed. The authors confirm that this manuscript provides an accurate, thorough, and comprehensive account of the research conducted; it covers all key aspects of the study.

Eligibility Criteria

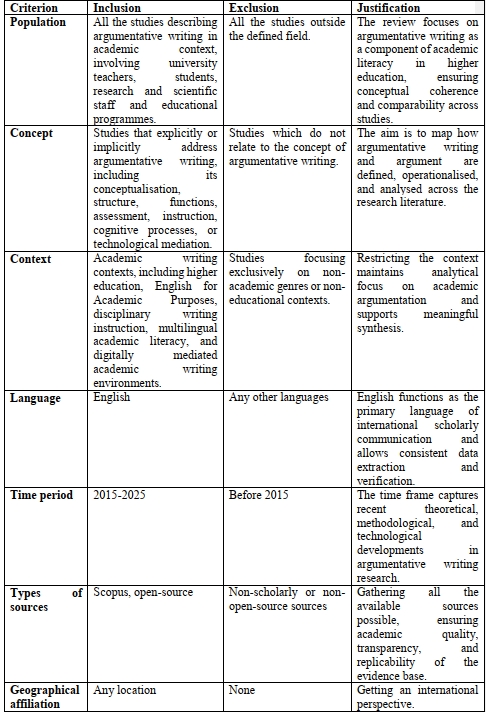

This scoping review followed a structured methodological framework comprising five key stages: (1) defining the research questions; (2) identifying relevant sources; (3) selecting studies for inclusion; (4) charting and extracting pertinent data; and (5) collating, summarizing, and reporting the results. Inclusion criteria were established using the PCC framework as recommended in PRISMA-ScR guidelines. Additional eligibility restrictions were applied based on language of publication, publication date range, geographical focus/affiliation of the first author or study setting, and document type (Table 1). The review encompassed various source types, including peer-reviewed journal articles, books, book chapters, conference proceedings/papers, and one unpublished master’s thesis.

Table 1. Eligibility Criteria

Information Sources and Search Strategy

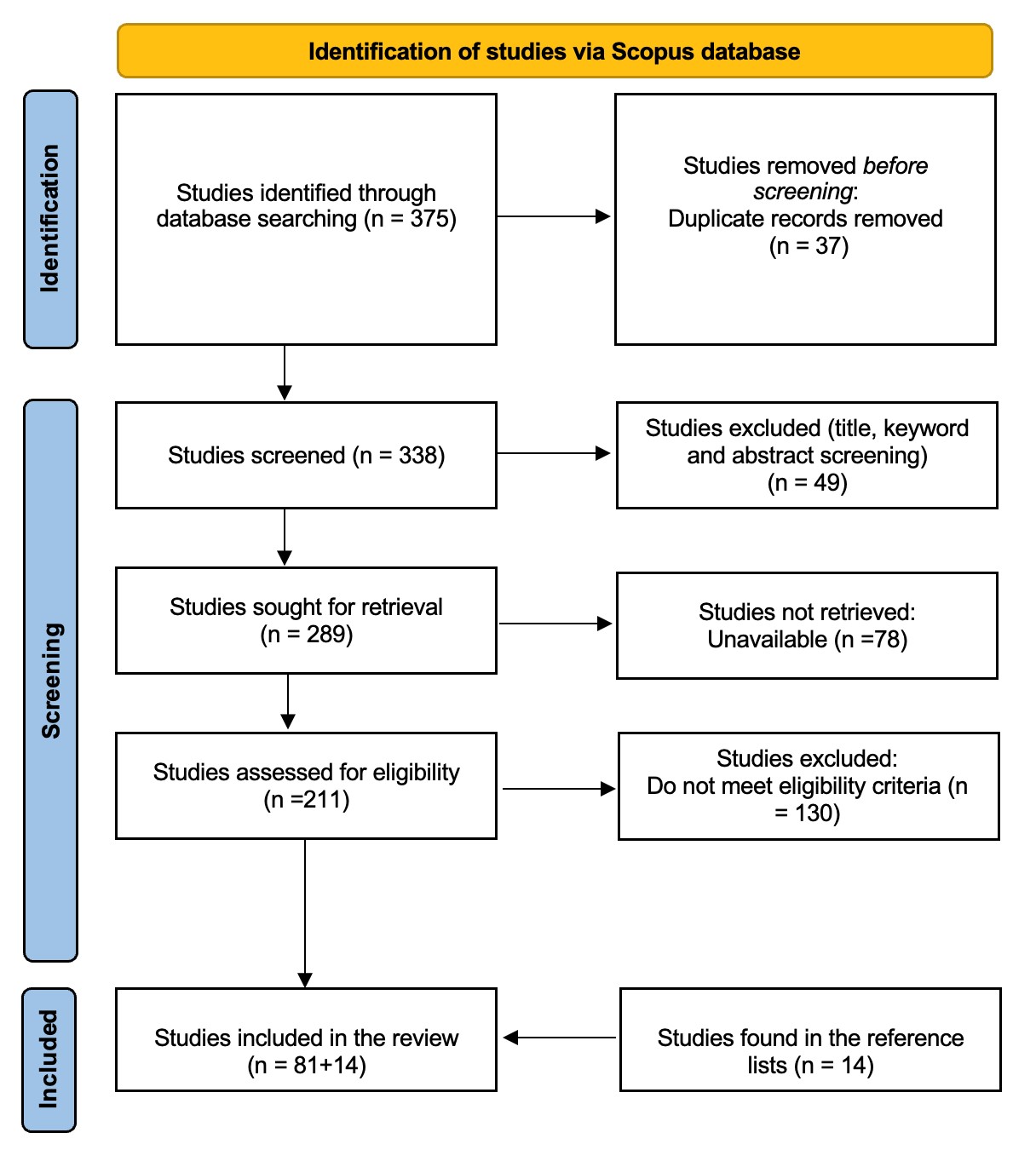

The literature search was performed in the Scopus database. Adherence to the PRISMA-ScR protocol is depicted in the flow diagram (Figure 1). A preliminary search (n = 375 records identified) informed the selection and refinement of search terms and index terms pertinent to argumentative writing in higher education contexts. The final search strategy, incorporating these optimized terms, was run on September 27, 2025. The search terms, refined by consulting relevant publications on the topic, were combined using Boolean operators (AND, OR, NOT) and truncation symbols. The following search queries were used:

- TITLE-ABS-KEY ("argumentative writing" OR "argument writing" OR "written argument*" OR "academic argument*");

- TITLE-ABS-KEY ((argument* OR reasoning) AND ("academic writing" OR "university writing" OR "higher education writing"));

- TITLE-ABS-KEY (("argumentative writing" OR "written argument*" OR "academic argument*" OR argument*) AND ("academic writing" OR "higher education" OR university OR EAP) NOT (law OR legal OR court OR medical OR clinical OR debate OR speech OR politic* OR "high school" OR K-12));

- TITLE-ABS-KEY ((argument* AND writing AND (university OR "higher education")) NOT (debate OR speech OR "oral argument*" OR rhetoric*));

- TITLE-ABS-KEY (("argumentative writing" OR argument*) AND ("academic writing" OR university OR "higher education") NOT (primary OR elementary OR "middle school" OR "high school" OR K-12)).
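The queries above share one pattern: groups of OR-joined terms combined with AND, followed by an optional NOT block. As a minimal sketch of how such strings could be assembled reproducibly, the Python below rebuilds the last query in the list. The helper names `any_of` and `title_abs_key` are hypothetical and are not part of the review's protocol; the code only builds text and does not call any Scopus interface.

```python
def any_of(terms):
    """Join terms with OR, quoting multi-word phrases (hypothetical helper)."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def title_abs_key(include_groups, exclude=None):
    """Build a TITLE-ABS-KEY string: AND across groups, optional NOT block."""
    body = " AND ".join(any_of(group) for group in include_groups)
    if exclude:
        body += " NOT " + any_of(exclude)
    return f"TITLE-ABS-KEY ({body})"


# Terms copied from the last query in the list above.
query = title_abs_key(
    include_groups=[
        ["argumentative writing", "argument*"],
        ["academic writing", "university", "higher education"],
    ],
    exclude=["primary", "elementary", "middle school", "high school", "K-12"],
)
print(query)
```

Keeping the term groups as data rather than as hand-typed strings makes it easier to report exactly which inclusion and exclusion terms were used in each query.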

After screening, 81 studies from the Scopus search were retained. In addition, the reference lists of the selected studies were examined to identify further relevant research, which yielded 14 more studies (Figure 1).

Figure 1. PRISMA-ScR Protocol

Selection of Evidence Sources

The references retrieved from the search were imported into a Zotero library for organization. Duplicate records were identified and removed using the reference management software's built-in deduplication function. Two independent reviewers screened the deduplicated library in sequential phases: (1) title, keyword, and abstract screening against the predefined inclusion and exclusion criteria; (2) full-text evaluation of potentially eligible sources. Consensus discussions were held after each phase to resolve any discrepancies regarding eligibility. Persistent disagreements were adjudicated by a third reviewer.

All duplicate records were removed prior to screening. During the title, keyword, and abstract screening stage, 49 sources were excluded as they fell outside the scope of argumentative writing. Of the remaining 289 sources, 78 were excluded due to unavailability of full text. Full-text review of the 211 accessible sources resulted in the exclusion of 130 that did not meet the inclusion criteria, leaving 81 sources for initial inclusion. An additional 14 relevant sources were identified through backward citation searching (hand-screening of reference lists from included studies). Ultimately, 95 sources were included in this scoping review (see Appendix 1 for the complete list).
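The selection counts reported above can be checked with simple arithmetic. The sketch below uses only the figures stated in the text; the number of records screened after deduplication (`screened`) is not stated explicitly and is derived here as an assumption from the 289 remaining plus the 49 excluded.

```python
# Counts reported in the text.
excluded_title_abstract = 49
remaining_after_screening = 289
no_full_text = 78
excluded_full_text = 130
from_reference_lists = 14

# Derived value (assumption): records screened after deduplication.
screened = remaining_after_screening + excluded_title_abstract

full_text_assessed = remaining_after_screening - no_full_text
included_from_scopus = full_text_assessed - excluded_full_text
total_included = included_from_scopus + from_reference_lists

print(screened, full_text_assessed, included_from_scopus, total_included)
```

Under these assumptions, the intermediate values agree with the 211 accessible sources, the 81 sources retained from Scopus, and the 95 sources included overall.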

Data Charting Process

Data charting was conducted in duplicate and independently by two reviewers, with one reviewer performing initial extraction and the second conducting verification of all entries. Any inconsistencies were resolved via consensus discussions. A pre-defined, standardized Excel-based charting template was used to extract and organize pertinent data from included sources. Charted variables encompassed: author names and institutional affiliations; year of publication; document objectives and summary description; definitions of argumentative writing; argument classification schemes; argument structures/models; research trends in argumentative writing; and considerations of digital contexts and assessment. This methodical process supported a rigorous descriptive synthesis of the evidence in relation to the review's aims.

Summarising and Reporting the Results

Following data charting, the reviewers synthesized findings related to key aspects of “argument” identified in the sources. Given the observed terminological inconsistency during screening, a dedicated analysis of definitions of “argument” and “argumentative writing” was performed to identify shared core characteristics, ultimately informing a consensus operational definition. Definitions were systematically compiled into numbered Microsoft Word documents.

Thematic analysis adhered to the reflexive approach described by Braun and Clarke (2006), involving: familiarization with the data; independent and collaborative code generation and refinement; development of candidate themes; independent application of codes/themes by both coders; and iterative review to achieve consensus. Inter-coder reliability reached >93% agreement on themes, codes, and extracts. Disagreements were resolved via detailed discussion, leading to code/theme adjustments and a confirmatory recoding round. The same structured coding procedure was applied to synthesize evidence on causes and consequences of superfluous verbiage in academic texts, as documented in the reviewed literature.
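The text reports agreement above 93% but does not name the statistic used. As an illustration only, the sketch below computes simple percent agreement and, for comparison, Cohen's kappa, which corrects for chance agreement. The code labels and both coders' decisions are hypothetical and are not taken from the review's data.

```python
from collections import Counter


def percent_agreement(a, b):
    """Share of items to which both coders assigned the same code."""
    if len(a) != len(b) or not a:
        raise ValueError("Both coders must label the same non-empty item set.")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Chance-corrected agreement for two coders and nominal codes."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    counts_a, counts_b = Counter(a), Counter(b)
    labels = counts_a.keys() | counts_b.keys()
    p_expected = sum(counts_a[k] * counts_b[k] for k in labels) / (n * n)
    if p_expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes for ten extracts; the coders differ on one extract.
coder_a = ["definition", "definition", "model", "model", "model",
           "quality", "quality", "digital", "digital", "quality"]
coder_b = ["definition", "definition", "model", "model", "quality",
           "quality", "quality", "digital", "digital", "quality"]

print(percent_agreement(coder_a, coder_b))
print(round(cohens_kappa(coder_a, coder_b), 3))
```

Percent agreement is easy to interpret but tends to overstate reliability when a few codes dominate, which is why a chance-corrected coefficient is often reported alongside it.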

Results

Demographic Characteristics of the Selected Sources

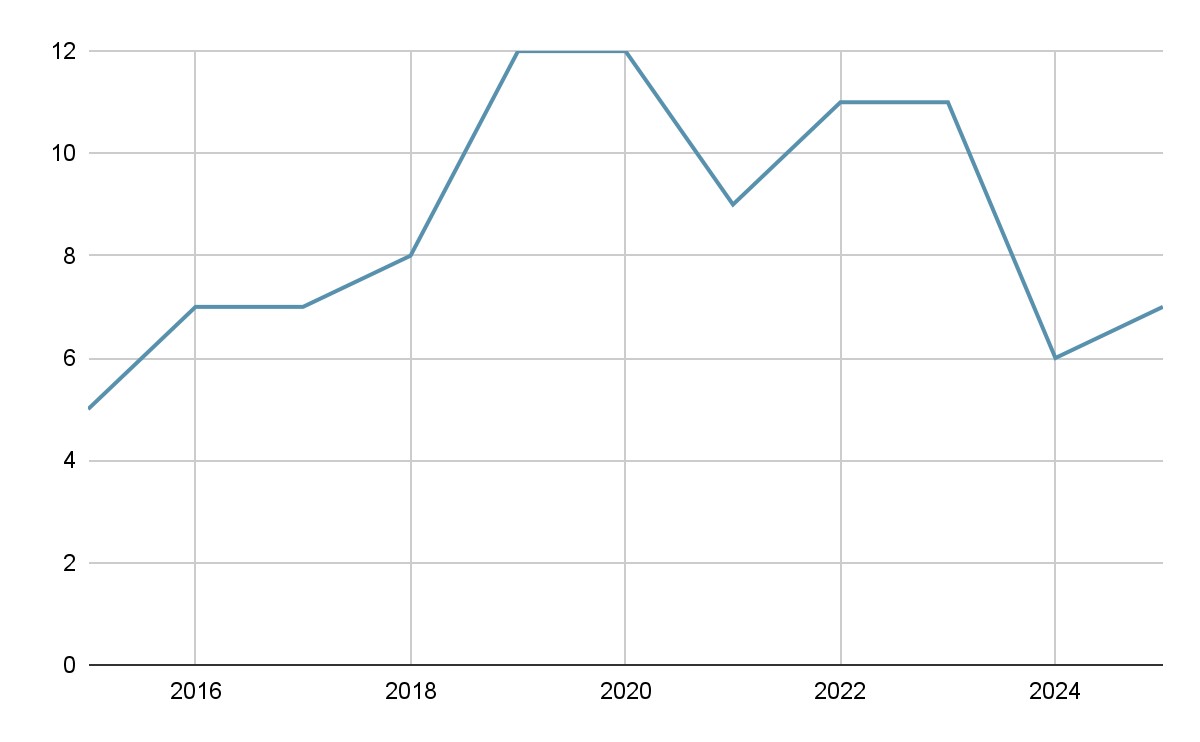

Publication Years of the Included Sources

An analysis of 95 selected studies reveals a clear developmental trajectory in academic writing research over the past decade (Figure 2). In the early period (2015-2017), publication output was relatively modest, slowly increasing during these years, indicating the consolidation of foundational research themes. This upward trend continued into 2018, suggesting growing scholarly engagement with academic and argumentative writing.

A marked expansion occurred in 2019 and 2020, when the number of studies peaked at twelve per year. This surge reflects intensified interest in cognitive, pedagogical, and technological dimensions of academic writing, including assessment, instructional interventions, and digital tools. Although output declined slightly in 2021, productivity remained consistently high in 2022 and 2023, demonstrating sustained research momentum and diversification of methodological approaches.

In the most recent years, 2024 recorded six studies and 2025 seven studies. This apparent decrease is best interpreted cautiously, as it may reflect incomplete indexing and publication delays rather than a substantive reduction in scholarly activity. Overall, the longitudinal pattern suggests that academic writing has evolved into a mature and stable research field, characterized by strong growth after 2018, a productivity peak around 2019-2020, and continued high-level output in subsequent years.

Figure 2. Distribution of the sources by year

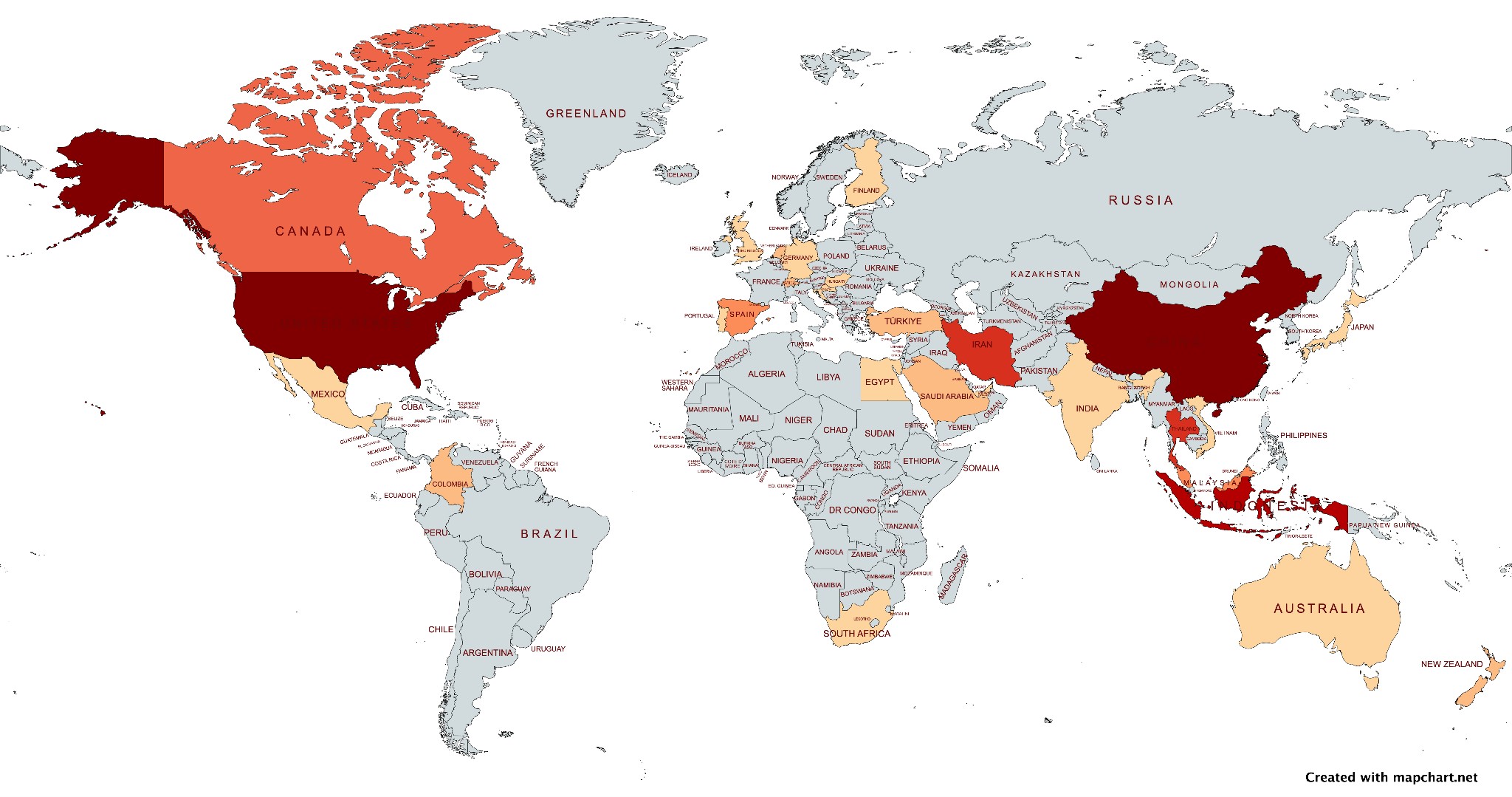

Geographic Affiliation of the Sources’ Authors

The geographic distribution of authors’ affiliations in the selected studies is shown as a density map (Figure 3) produced with MapChart, in which dark red represents higher output and light orange lower output. The clearest pattern is the dominance of China and the United States, which are tied as the most frequent affiliations (18 studies each). A second tier of contribution is led by Indonesia (10), which appears notably prominent in Southeast Asia. Several countries form a mid-level group, including Iran and Thailand (6 each) and Canada (4), producing visible but less intense shading. Additional contributions are more dispersed: Malaysia and Spain contribute 3 affiliations each, while a set of countries, including the Netherlands, Switzerland, Turkey, Saudi Arabia, Colombia, and New Zealand, appear at 2 affiliations each. Many other countries occur only once, such as Australia, Japan, India, Germany, Finland, Mexico, Portugal, South Africa, Egypt, the UK, the UAE, Vietnam, Belgium, Croatia, and Hungary, which corresponds to very light shading or minimal visibility on the map.

Figure 3. Geographic distribution of the sources

Regionally, the pattern indicates a strong concentration in Asia (53.7% of affiliations), followed by the Americas (26.3%), with Europe (14.7%) contributing across many countries but in smaller, fragmented amounts. Oceania (3.2%) and Africa (2.1%) are comparatively limited. Research activity is concentrated in a few key countries (China and the USA) supported by a smaller but substantial cluster in Southeast Asia, while much of the rest of the world shows scattered, low-frequency contributions to the topic.
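The regional shares can be recomputed from the country counts given in the text. The sketch below is illustrative: the counts are those listed above, while the assignment of countries to regions (for example, Turkey and Iran to Asia) is an assumption chosen because it reproduces the reported percentages.

```python
# Country counts as listed in the text; the region grouping is an assumption.
affiliations_by_region = {
    "Asia": {"China": 18, "Indonesia": 10, "Iran": 6, "Thailand": 6,
             "Malaysia": 3, "Turkey": 2, "Saudi Arabia": 2, "Japan": 1,
             "India": 1, "UAE": 1, "Vietnam": 1},
    "Americas": {"United States": 18, "Canada": 4, "Colombia": 2, "Mexico": 1},
    "Europe": {"Spain": 3, "Netherlands": 2, "Switzerland": 2, "Germany": 1,
               "Finland": 1, "Portugal": 1, "UK": 1, "Belgium": 1,
               "Croatia": 1, "Hungary": 1},
    "Oceania": {"New Zealand": 2, "Australia": 1},
    "Africa": {"South Africa": 1, "Egypt": 1},
}

total = sum(sum(c.values()) for c in affiliations_by_region.values())
shares = {
    region: round(100 * sum(countries.values()) / total, 1)
    for region, countries in affiliations_by_region.items()
}

print(total)
for region, share in shares.items():
    print(f"{region}: {share}%")
```

With this grouping the listed counts sum to 95, which matches the number of included sources, and the rounded shares match the percentages reported above.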

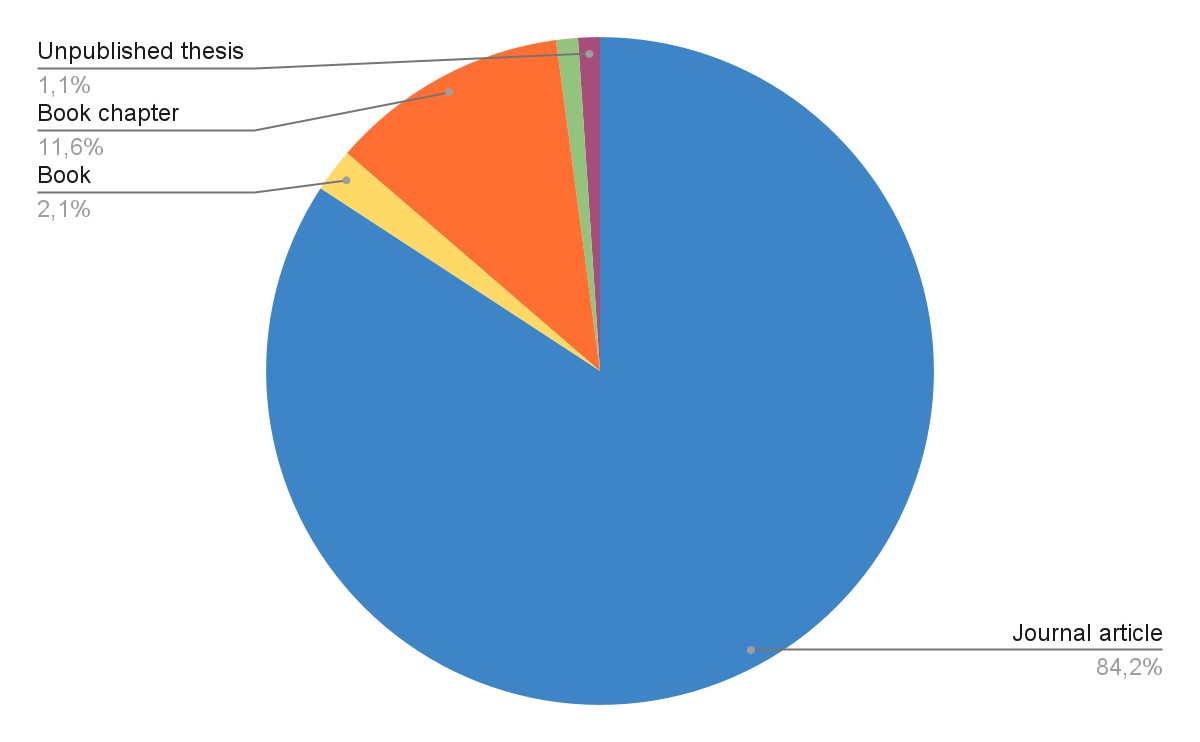

Types of the Selected Sources

An analysis of the publication types in the selected sources shows a strong dominance of journal articles, which account for approximately 84%. This indicates that research on argumentative writing is primarily disseminated through peer-reviewed journals, reflecting a mature and actively publishing research field. Book chapters are represented by about 12%, suggesting a secondary but still meaningful contribution from edited volumes, typically used for theoretical consolidation, methodological discussion, or pedagogical synthesis. Books are only around 2%, which implies that monographic treatments of argumentative writing are relatively rare compared with article-based dissemination. Only one conference paper (approximately 1%) and one unpublished master’s thesis (approximately 1%) are present, indicating that grey literature and preliminary research outputs play a minimal role in this corpus (Figure 4).

Figure 4. Publication types of the sources

The distribution underscores that research on argumentative writing in academic settings relies primarily on journal-based sources, with books and chapters providing supplementary theoretical or pedagogical contributions. This pattern reflects a mature, empirically grounded research area where peer-reviewed articles serve as the central vehicle for knowledge accumulation and dissemination.

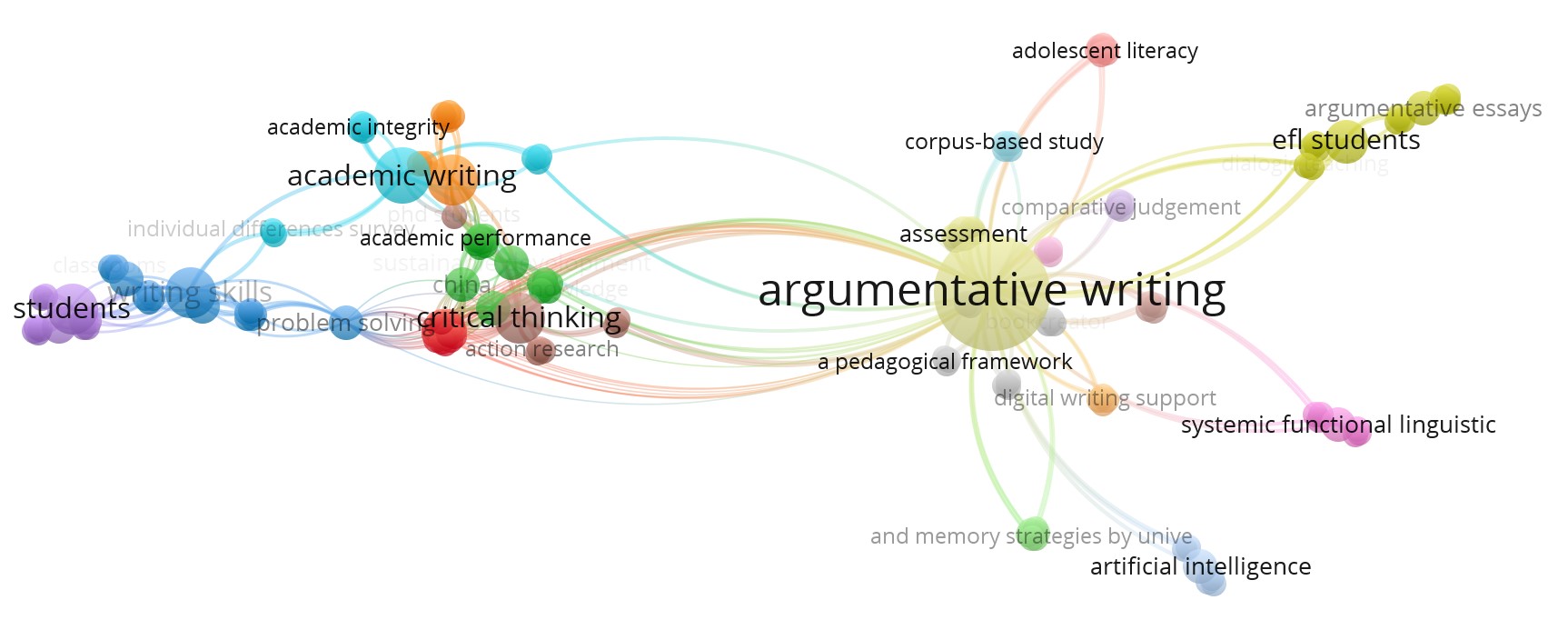

Clusters by VOSviewer

To obtain an initial overview of the research space, we mapped keyword co-occurrences in the selected corpus using VOSviewer (Figure 5). Each colour represents a separate cluster obtained through modularity-based clustering of the frequency with which keywords co-occur in the selected publications. The size of each node corresponds to the number of times the keyword appears in the corpus, while the thickness of each line indicates the strength of the connection between two terms. Nodes in the centre of the network are more connected to other terms, while those on the periphery are relatively isolated. The software produced 22 clusters, reflecting the thematic dispersion of the literature and the tendency of keywords to form small, locally dense groupings. Because VOSviewer relies on co-occurrence rather than semantic proximity, the resulting structure is informative as a first-pass map but too granular for analytic synthesis. For this reason, we use the VOSviewer output as an exploratory baseline and then consolidate the 22 clusters into four higher-order clusters guided by semantic relatedness, shared research tasks, and established classifications in argumentation research (Appendix 2).
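The raw quantities behind node size and link thickness can be illustrated with a short sketch. It counts keyword occurrences and pairwise co-occurrences across records. The keyword lists are hypothetical, and the code does not reproduce VOSviewer itself, which additionally normalises link strengths and performs layout and clustering.

```python
from collections import Counter
from itertools import combinations

# Hypothetical author-keyword lists, one list per publication.
records = [
    ["Argumentation", "Academic writing", "Critical thinking"],
    ["Academic writing", "Toulmin model", "Argumentation"],
    ["Assessment", "Rubric", "Academic writing"],
]


def cooccurrence(records):
    """Return (keyword occurrence counts, keyword-pair co-occurrence counts)."""
    occurrences = Counter()
    links = Counter()
    for keywords in records:
        # Normalise case and count each keyword once per publication.
        unique = sorted({k.lower() for k in keywords})
        occurrences.update(unique)
        # Every unordered pair within one publication is one co-occurrence.
        links.update(combinations(unique, 2))
    return occurrences, links


occurrences, links = cooccurrence(records)
print(occurrences["academic writing"])
print(links[("academic writing", "argumentation")])
```

Because pairs are counted purely on joint appearance, two conceptually close keywords that rarely share a publication receive a weak link, which is one reason automatic clusters can be finer-grained than the thematic structure a reader would expect.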

Figure 5. Distribution of the clusters based on the keyword co-occurrence network in VOSviewer

Figure 5 therefore serves as a visual point of departure for the subsequent reconstruction, rather than as an analytic classification in its own right. The dispersion of terms reflects how VOSviewer constructs clusters: keywords are grouped by co-occurrence patterns rather than by semantic proximity or field-specific conceptual relations. This procedure produces a useful exploratory map, but it can also yield an overly fine-grained structure in which conceptually adjacent topics are distributed across multiple small clusters. Accordingly, we do not interpret the 22 clusters as analytic categories. Instead, we consolidate them into four enlarged thematic clusters (Appendix 2). The consolidation is guided by three criteria: semantic proximity of keywords as indicators of a shared research direction, unity of the research task, and consistency with established classifications and typologies in argumentation research. As a result, four conceptually coherent clusters were identified: (1) Theoretical and methodological foundations of argumentation in academic writing; (2) Evaluation and analysis of argumentative writing; (3) Sociolinguistic and interlinguistic aspects; (4) Cognitive-psychological and educational determinants of argumentation.

This approach made it possible to eliminate the excessive fragmentation of automatic clustering and to present the structure of the research field in a more coherent and analytically appropriate form. In the subsequent analysis, these consolidated clusters are compared with clusters identified manually through expert analysis of the annotated publications.

Cluster 1. Theoretical and Methodological Foundations of Argumentation in Academic Writing[2]

This cluster covers studies in which argumentation is viewed as a rhetorical-discursive phenomenon embedded in the system of academic writing and described through the prism of established theoretical and methodological approaches. The key concepts include academic writing (Ferretti and Fan, 2016; MacArthur and Graham, 2016; Uzun, 2024), academic integrity (Goldman et al., 2016; van Weijen et al., 2019; Deane et al., 2019), Toulmin argument structure (Ferretti and Fan, 2016; Zainuddin and Shammem, 2016; Rapanta and Macagno, 2019; McCarthy et al., 2022), argument quality (Stapleton and Wu, 2015; Lesterhuis et al., 2018; Allen et al., 2019), argumentation (Newell et al., 2015; Zhang, L., 2016; Lawrence and Reed, 2020; Yasuda, 2023; Kaewneam, 2025), critical thinking (Pei et al., 2017; Sharadgah et al., 2019; Raj et al., 2022; Hu and Saleem, 2023; Wang and Newell, 2025), appraisal theory (Yang, 2016; Hatipoğlu and Algı, 2018; Papangkorn and Phoocharoensil, 2021), and systemic functional linguistics (Zhang, X., 2019; Zhang and Zhang, 2021; Uzun, 2024). This range of concepts indicates the considerable theoretical depth of the field.

Several sub-areas can be identified within this cluster. The first is related to the adaptation of classical models of argumentation (in particular, Toulmin's structure) to the teaching of writing in an academic context (Newell et al., 2015; Weyand et al., 2018). The second involves the integration of critical thinking and metacognitive strategies into the process of developing argumentative competencies, which is reflected in the active use of the concepts of metacognition and critical thinking (Newell et al., 2015; Mateos et al., 2018; Arroyo González et al., 2020; Lu and Swatevacharkul, 2021). The third is the application of appraisal theory (Martin and White, 2005; Yang, 2016) and systemic functional linguistics (SFL) to analyze the pragmatic and interpersonal functions of argument in academic texts (Stapleton and Wu, 2015).

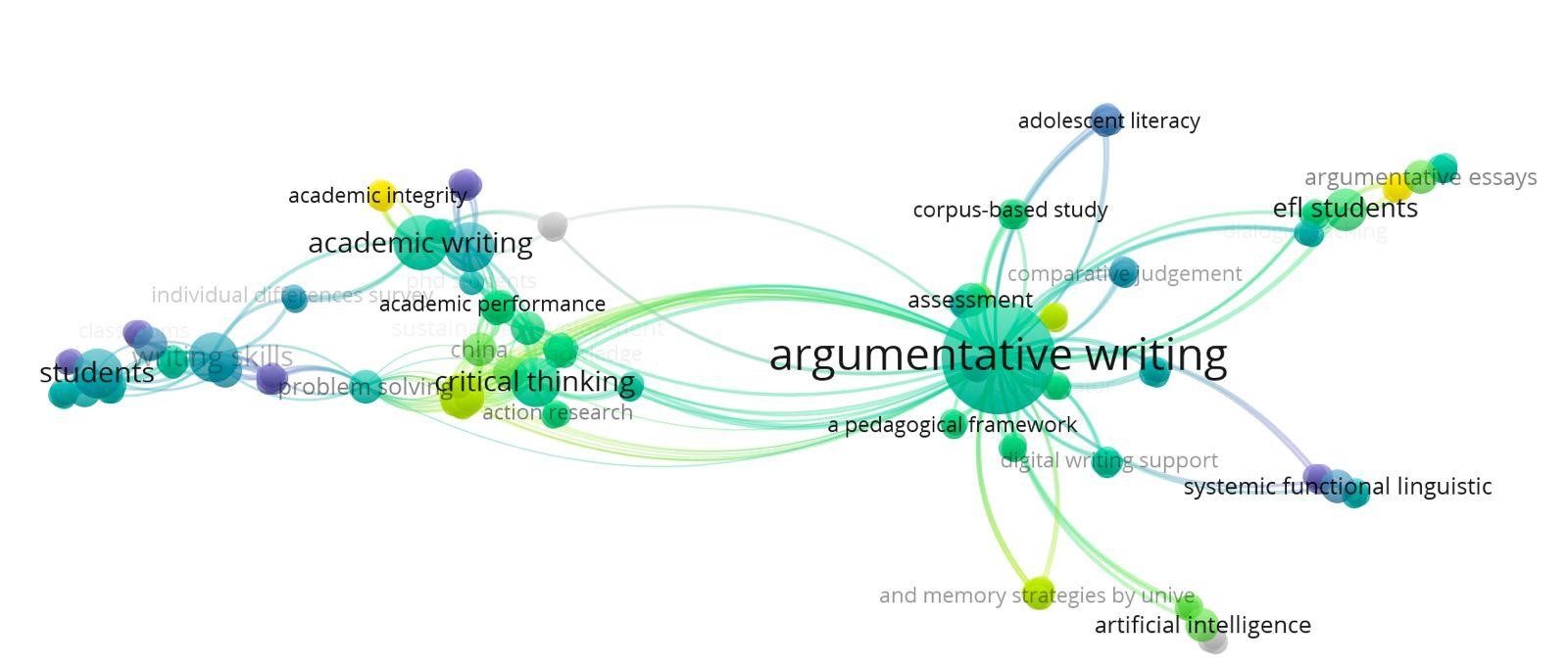

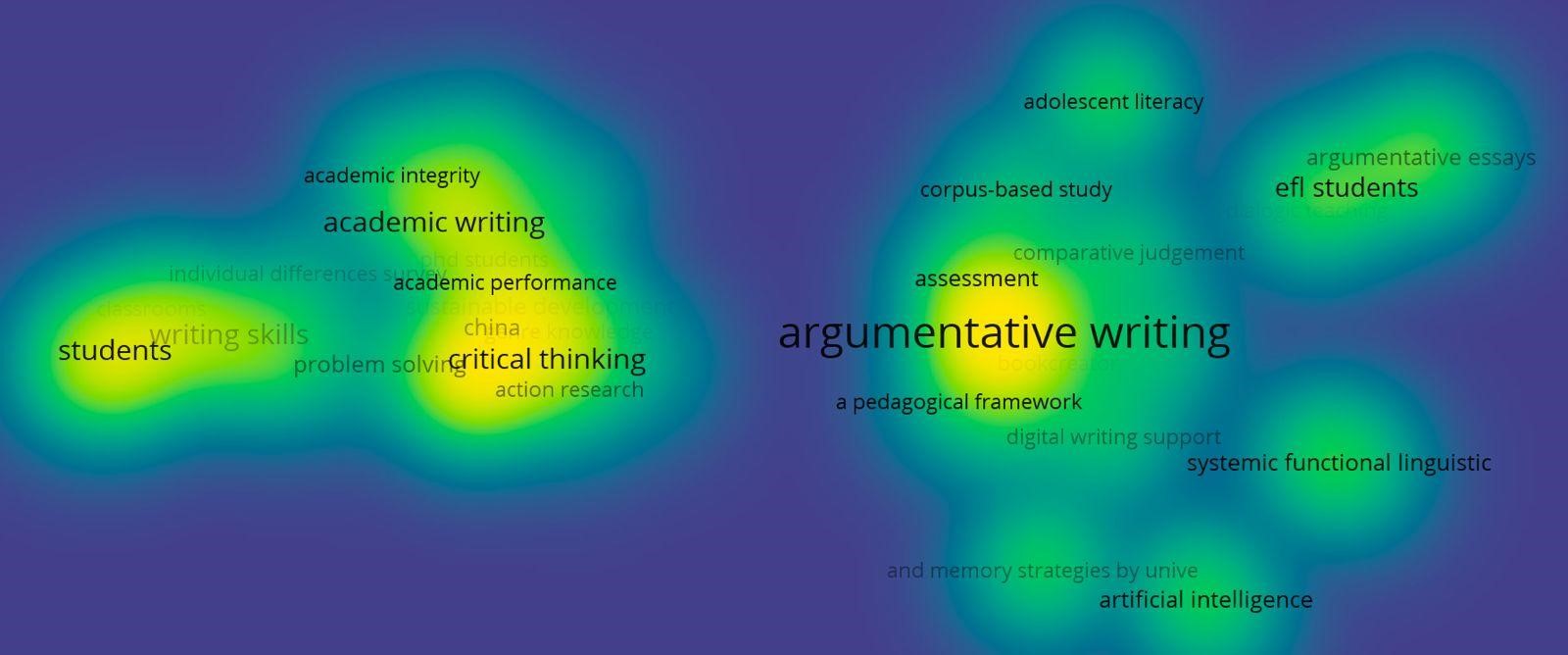

To characterise the internal dynamics of Cluster 1, we interpret the VOSviewer research timeline and density maps (Figures 6-7). The color scale in Figure 6 shows the average publication year of the works in which the keyword appears: purple and blue shades indicate earlier studies (2016-2019), light blue and greenish shades indicate the middle period (2020-2022), and yellow and light green shades indicate the most recent studies (2023-2025). The timeline shows that academic writing, academic integrity, Toulmin-oriented argument structure, and critical thinking constitute a stable conceptual core across the period, with early consolidation visible from 2016-2018. In 2020-2022, the thematic profile broadens to include argument quality, metacognition, and appraisal theory. In 2023-2025, the timeline shows stronger coupling to digitally mediated and intercultural contexts, suggesting that these contexts have become more tightly connected to the established core.
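The value encoded by the color scale, the average publication year of the works containing a keyword, can be illustrated as follows. The records are hypothetical and serve only to show the calculation; they are not drawn from the reviewed corpus.

```python
from collections import defaultdict

# Hypothetical (publication year, keywords) records.
records = [
    (2016, ["academic writing", "critical thinking"]),
    (2020, ["academic writing", "argument quality"]),
    (2022, ["argument quality"]),
    (2024, ["academic writing", "automated feedback"]),
]


def average_year_per_keyword(records):
    """Mean publication year of the works in which each keyword appears."""
    years = defaultdict(list)
    for year, keywords in records:
        for keyword in set(keywords):  # count a keyword once per work
            years[keyword].append(year)
    return {keyword: sum(v) / len(v) for keyword, v in years.items()}


averages = average_year_per_keyword(records)
for keyword, mean_year in sorted(averages.items(), key=lambda kv: kv[1]):
    print(f"{keyword}: {mean_year:.1f}")
```

A keyword used steadily across the whole period receives a mid-range average, so a mid-range color can indicate either a topic confined to the middle years or a stable topic present throughout; the timeline is best read together with the density view.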

Figure 6. Distribution of research topics on argumentative writing over time (2016-2025) according to VOSviewer visualization data

The density map (Figure 7) shows high saturation in the same area, indicating sustained attention and frequent co-occurrence of the corresponding keywords within the mapped corpus. The colored areas reflect the density of keyword co-occurrence: the brighter and warmer the color (yellow, light green), the higher the concentration of publications on the topic; areas in cooler shades (blue, purple) indicate a lower frequency of the term in the sample and lower research saturation of the topic. The timeline and density views indicate that, within the mapped corpus, foundational constructs such as academic writing, Toulmin-oriented structure, and critical thinking form a stable conceptual core, with later years showing stronger coupling to digitally mediated and intercultural contexts. This shift adds new contexts of application and new methodological entry points.

Figure 7. Density of research on argumentative writing topics based on mapping the co-occurrence of keywords in VOSviewer

Cluster 2. Assessment and Analysis of Argumentative Writing[3]

This cluster focuses on developing, validating and applying tools for assessing argumentative writing, and on using digital technologies to support and analyse written texts. Key concepts include assessment (Lesterhuis et al., 2018; Rapanta and Macagno, 2019; Deane et al., 2019), rubric development (MacArthur et al., 2019; Ozfidan and Mitchell, 2022), learning outcomes (Hisgen et al., 2020; Luna et al., 2020; Hu and Saleem, 2023), comparative judgement (Stapleton and Wu, 2015; Lesterhuis et al., 2018), text assessment (Allen et al., 2019; McCarthy et al., 2022), validity (Lesterhuis et al., 2018; Ozfidan and Mitchell, 2022), digital writing support (Benetos and Bétrancourt, 2020; Jin et al., 2020; Guo et al., 2022; Shi et al., 2022), technology-enhanced learning (Lam et al., 2018; Latifi et al., 2020; Wambsganss et al., 2020), corpus-based study (Allen et al., 2019; Yang J. et al., 2023), and interactional metadiscourse (Papangkorn and Phoocharoensil, 2021; Al-Otaibi and Hussain, 2024).

Research within this cluster addresses two key objectives. First, it focuses on the development of objective and reliable procedures for assessing argumentative writing (Wambsganss et al., 2020; Cheong et al., 2021), including the design of analytic scoring schemes and rubrics and the empirical examination of their validity and interpretability (e.g., Abdollahzadeh et al., 2017; Deane et al., 2019; Ozfidan and Mitchell, 2022; Stapleton and Wu, 2015). These studies analyse how textual features such as claims, evidence, counter-arguments, and engagement markers are operationalised in assessment criteria and how rater judgements align with theoretical models of argument quality.

Second, this body of research investigates the integration of digital and technology-enhanced tools into both assessment and instruction. This includes the use of corpus-based analysis to examine patterns of support and counter-arguments in learner writing (McCarthy et al., 2022), scenario-based and automated assessment approaches (Deane et al., 2019), and digital authoring and feedback systems designed to scaffold argumentative processes (Benetos and Bétrancourt, 2020; Maharani and Santosa, 2021). More recent studies explore AI-mediated and chatbot-supported writing environments, demonstrating their potential to provide real-time scaffolding, formative feedback, and enhanced learner engagement in argumentative writing tasks (Guo et al., 2022; Guo et al., 2023; Su et al., 2023; Khampusaen, 2025).

Across the mapped studies, technology-mediated support is not distributed uniformly across modelling traditions. Work situated in assessment and analytic coding most often draws on Toulmin-oriented componential logic and is commonly implemented in technology-enhanced formats that foreground structured reasoning and feedback, including blended and gamified designs (Lam et al., 2018; Jin et al., 2020; Wambsganss et al., 2020; Shi et al., 2022; Mulyati and Hadianto, 2023). By contrast, research discussing digital authoring and online training tends to treat support at the level of general writing environments rather than framing it around Classical arrangement as an explicit model-specific design principle (Benetos and Bétrancourt, 2020; Luna et al., 2020; Maharani and Santosa, 2021). Studies with a dialogic orientation increasingly report AI-mediated and chatbot-supported scaffolding that prompts writers to articulate alternative positions and revise toward more dialogic formulations (Najjemba and Cronjé, 2020; De Castro Daza et al., 2022; Guo et al., 2022; Guo et al., 2023; Su et al., 2023; Khampusaen, 2025).

The early stage (2016-2019) centres on assessment, rubric development, learning outcomes, validity, and corpus-based study. From 2020 to 2022, the focus shifts to comparative judgement and interactional metadiscourse. From 2023 to 2024, digital writing support and technology-enhanced learning develop actively. This area (centre-right) has one of the highest densities on the heatmap, especially around argumentative writing and assessment, which reflects intensive research effort and the inter-cluster connections that make assessment a central element of the field.

Cluster 3. Sociolinguistic and Interlinguistic Aspects of Argumentation[4]

This cluster covers research examining argumentation in the context of interlingual interference, cultural and linguistic differences, and the specifics of teaching English as a second or foreign language. The key terms identified in this cluster are EFL students (Abdollahzadeh et al., 2017; Tankó and Csizér, 2018; Ghanbari and Salari, 2022; Almashour and Davies, 2023; Mallahi, 2024), argumentative essays (Stapleton and Wu, 2015; Setyowati et al., 2017; McCarthy et al., 2022; Geng et al., 2024), second language writing (Kibler and Hardigree, 2017; Rubiaee et al., 2019; Cheong et al., 2021; Cvikić and Trtanj, 2022), bilingual advantage (Hsin and Snow, 2017; van Weijen et al., 2019), language minority (Goldman et al., 2016; Ozfidan and Mitchell, 2020; Thompson, 2021), first language (van Weijen et al., 2019; Cheong et al., 2021; Cvikić and Trtanj, 2022), second language (Hu and Li, 2015; Abdollahzadeh et al., 2017; Hatipoğlu and Algı, 2018; Papangkorn and Phoocharoensil, 2021), source use behavior (Kibler and Hardigree, 2017; Cheong et al., 2021; Shi et al., 2022), dialogic teaching (Weyand et al., 2018; Musa, 2019; Najjemba and Cronjé, 2020; De Castro Daza et al., 2022), and language development (Hsin and Snow, 2017; Rubiaee et al., 2019; Cheong et al., 2021; Uzun, 2024).

Research in this field has examined how a student's first language and cultural background can influence the structure of arguments in English academic writing (Hatipoğlu and Algı, 2018; Amini Farsani et al., 2025). Important topics include dialogic teaching (Su, 2023) and mediative practices aimed at developing argumentative skills in bilingual and multilingual students (Mercer and Littleton, 2007). Particular attention is given to the specifics of source use behaviors and academic intertextuality in second language (L2) writing (Hatipoğlu and Algı, 2018).

Early topics (2016-2019) include EFL students, argumentative essays, language development, and dialogic teaching. In 2020-2022, attention turns to source use behavior, bilingual advantage, and language minority. In 2023-2025, perspective taking and sociocognitive development emerge. On the density map, this zone (top right) is less concentrated than Clusters 1 and 2, reflecting greater thematic dispersion and a relatively smaller volume of publications, although within the EFL subgroup the density is higher than in other L2 areas.

Cluster 4. Cognitive-psychological and Educational Determinants of Argumentation[5]

The fourth cluster covers studies in which argumentation in academic writing is analysed through the prism of general cognitive, psychological, and educational factors. Key concepts include critical thinking disposition (Zainuddin and Shammem, 2016; Pei et al., 2017; Widyastuti, 2018; Nguyen and Nguyen, 2020; Hu and Saleem, 2023), cognitive processes (Klein et al., 2016; Mateos et al., 2018; Lamb, Hand and Yoon, 2019; Allen et al., 2019; Wang and Said, 2024), self-regulation (Latifi et al., 2020; Hisgen et al., 2020), problem solving (Ferretti and Fan, 2016; Nesbit et al., 2019; Davies, Barnett and van Gelder, 2021), academic performance (Rubiaee et al., 2019; García et al., 2020; Luna et al., 2020; Lu and Swatevacharkul, 2021), motivation (Lam, Hew and Chiu, 2018; Najjemba and Cronjé, 2020; Hutasuhut et al., 2023), learning disabilities (MacArthur et al., 2019; Hisgen et al., 2020), reading comprehension (Goldman et al., 2016; Kibler and Hardigree, 2017; van Weijen et al., 2019), syntax (MacArthur et al., 2019; Cvikić and Trtanj, 2022), grammar (Hu and Li, 2015; Hatipoğlu and Algı, 2018), and writing skills (Ferretti and Lewis, 2019; Newell et al., 2015; Setyowati et al., 2017; Mallahi, 2024; Ilyas and Arifin, 2025).

Studies in this cluster often go beyond analyzing argumentation as a separate skill, considering it in relation to overall academic literacy, language competence, and cognitive development (Ferretti and Fan, 2016; Goldman et al., 2016; Newell et al., 2015). Within this broader perspective, argumentative writing is conceptualised as a cognitively demanding, literacy-embedded activity that draws on disciplinary reading practices, source integration, and reasoning across texts (Mateos et al., 2018; Cheong et al., 2021; van Weijen et al., 2019). Accordingly, this line of research includes both the study of predictors of successful argumentative writing, such as motivation, metacognitive strategies, problem-solving ability, and cognitive flexibility (Nesbit et al., 2019; Allen et al., 2019; Arroyo González et al., 2020; Murtadho, 2021; Almashour and Davies, 2023; Hutasuhut et al., 2023), and the analysis of limitations associated with learners who experience academic or cognitive difficulties, including low-achieving students, basic writers, and those with constrained linguistic or strategic resources (Hisgen et al., 2020; MacArthur et al., 2019; Ozfidan and Mitchell, 2020; Mallahi, 2024; Wang and Said, 2024).

The early stage (2016–2019) is represented by students, writing skills, reading comprehension, grammar, syntax, critical thinking disposition, and cognitive processes. From 2020 to 2022, the focus shifts to self-regulation, problem solving, motivation, pre-service teachers and classrooms. From 2023 to 2024, predictive validity and cognitive load research emerge. On the density map, the lower left sector shows a moderate concentration around students and writing skills, reflecting significant, yet more evenly distributed, interest, without the pronounced peaks that are characteristic of Clusters 1 and 2.

Thematic Clusters by Researchers

VOSviewer analysed and grouped the metadata of all the studies into 22 clusters. We consolidated these detailed clusters into four broader thematic clusters: (1) Theoretical and methodological foundations of argumentation in academic writing; (2) Evaluation and analysis of argumentative writing; (3) Sociolinguistic and interlinguistic aspects; (4) Cognitive-psychological and educational determinants of argumentation (Appendix 2).

While the VOSviewer mapping provided a valuable macro-level visualization of keyword co-occurrences and identified four broad metadata-based thematic clusters, it could not fully capture how argument is conceptualized, modelled, taught, and assessed across the reviewed literature. A subsequent full-text qualitative synthesis of the included studies (n = 95) therefore enabled a more interpretive and content-sensitive structuring of the field. This analysis produced five analytical clusters: (1) Nature of argumentation; (2) Argumentative writing and its aspects; (3) Models of arguments; (4) Critical thinking as a tool for argumentative writing; and (5) Digitalisation and AI tools for argumentative writing. Together, these clusters provided a more differentiated account of the field than metadata mapping alone.

The qualitative mapping further showed that the studies differ not only in thematic focus, but also in their definitions of argumentative writing, their preferred models of argument, and the criteria used to operationalize argument quality, including in digitally mediated contexts. Viewed together, these dimensions position argument theory, assessment practices, and technology-enhanced writing support as interconnected rather than isolated strands of research.

Cluster 1. Nature of Argumentation

Argumentation refers to a scholarly form of communication through which writers address controversial questions and manage differing viewpoints in order to move toward reasoned resolution (Van Eemeren and Grootendorst, 2004). It involves taking a position and working to make that position more acceptable to an academic audience (Leitão, 2001) by advancing clearly stated claims supported through evidence and explicit reasoning (Jin et al., 2020). As a textual genre, argumentation is integral to the construction of scientific articles and functions as a key means by which writers participate in, and are initiated into, disciplinary knowledge and its communicative practices (Arroyo González et al., 2020). In doing so, academic argumentation operates as a rhetorical process that relies on structural logic, i.e. presenting opinions, supporting them with facts, and incorporating counteractive moves, while using appropriate tone, voice, and language to convince readers within scholarly debate (Rapanta and Macagno, 2019; Yasuda, 2023).

Argumentation is a rhetorical and communicative practice that is used to address controversial issues and resolve differences of opinion. It involves taking a stance on an issue and defending it within an ongoing dialogue (Van Eemeren and Grootendorst, 2004; Musa, 2019). This is typically achieved by using structural logic to present opinions, support them with facts and incorporate counteractive moves (Ferretti and Fan, 2016; Rapanta and Macagno, 2019; Yasuda, 2023). Argumentation is integral to scientific articles, functioning as a means of conveying disciplinary knowledge and its communicative practices (Arroyo González et al., 2020).

Argumentation is also understood as an attempt at rational persuasion. In this context, writers provide reasons to strengthen or reject an opinion, position, or idea, and demonstrate the truth or untruth of a statement using rhetorical strategies (Mulyati and Hadianto, 2023; Murtadho, 2021). However, developing written argumentation requires additional complexity and control of written conventions, as it is not simply a case of transferring oral arguments into writing (Hsin and Snow, 2017). As argumentation is fundamental to critical thinking and effective participation in society and academia, it is considered a vital lifelong skill and educational objective, helping learners to formulate reasoned opinions and manage increasingly complex knowledge (Tankó and Csizér, 2018; Musa, 2019; Ekalia et al., 2025). High-quality argumentation depends on supporting reasons and evidence; a claim cannot stand alone without justification, otherwise it remains mere opinion. Weaknesses in reasons and evidence may also reflect limited background knowledge of the topic (Widyastuti, 2018).

Cluster 2. Argumentative Writing and Its Aspects

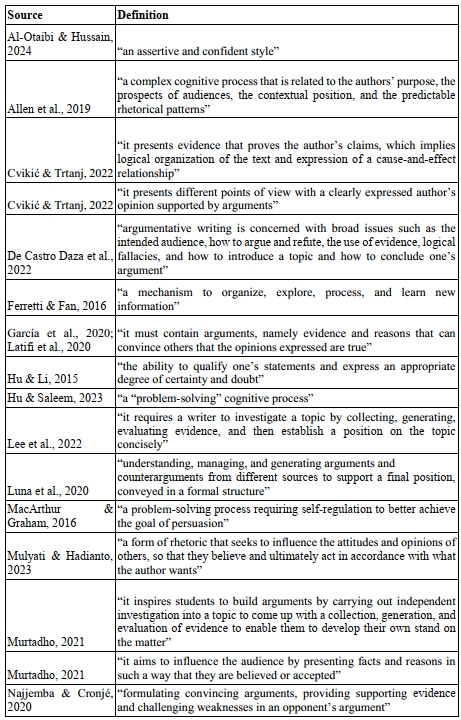

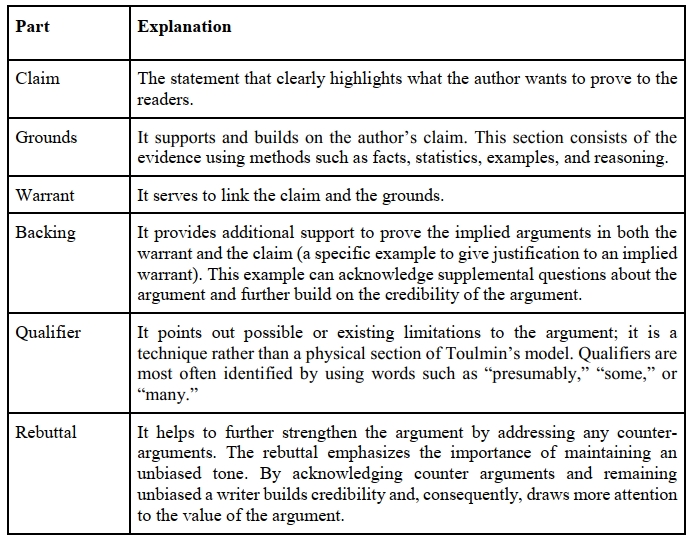

Across the reviewed sources, “argumentative writing” is defined through different emphases rather than a single shared formulation. Some authors treat it primarily as persuasion and rhetorical influence on the reader (Yang, 2016; Mulyati and Hadianto, 2023; Murtadho, 2021), while others frame it as a cognitively demanding problem-solving activity that involves inquiry, evidence evaluation, and self-regulation (MacArthur and Graham, 2016; Hu and Saleem, 2023; Lee et al., 2022; Pei et al., 2017). Another group places greater weight on dialogic engagement, including refutation, counterargument, and audience responsiveness (Najjemba and Cronjé, 2020; De Castro Daza et al., 2022; Robillos and Art-in, 2023). Table 2 compiles the definitions extracted from the mapped studies and illustrates this variation in construct emphasis.

Table 2. Definitions of argumentative writing

The definitions share a common core but differ in what is treated as decisive, whether stance and persuasion, evidential justification, or interactional positioning among voices (Allen et al., 2019; De Castro Daza et al., 2022; Su et al., 2023). These differences feed directly into later operational choices, including coding schemes, rubrics, and automated indicators used to represent “argument quality” (Stapleton and Wu, 2015; Lu and Swatevacharkul, 2021; Shi et al., 2022).

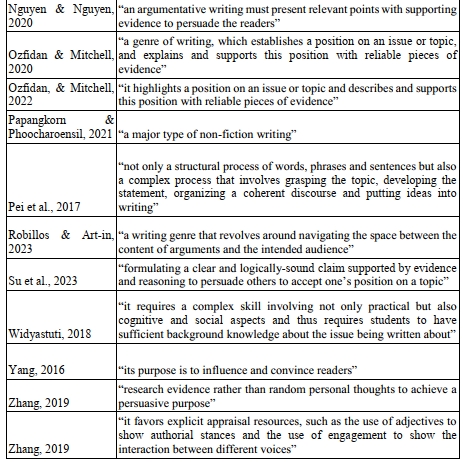

On the basis of the definitions summarised in Table 2, we derived a set of recurring characteristics that appear across the corpus. The resulting profile covers rhetorical organisation, evidential and inferential work used to justify claims, and dialogic engagement with alternative positions and audience expectations (Allen et al., 2019; De Castro Daza et al., 2022; Najjemba and Cronjé, 2020). Table 3 presents these characteristics together with the sources that motivate each feature.

Table 3. Characteristics of argumentative writing

The profile in Table 3 indicates that argumentative writing is treated as a composite construct. Studies that use similar labels may therefore rely on different combinations of structural, reasoning-based, and dialogic criteria, which reduces comparability unless operational definitions are made explicit (Stapleton and Wu, 2015; Shi et al., 2022; Rapanta and Macagno, 2019).

Culture and Language Related Aspects in Argumentative Writing

Argumentative writing places heavy cognitive and rhetorical demands on writers even in the first language. To produce an effective argumentative paragraph, writers must choose among metadiscourse resources and use them in a controlled way to manage stance, guide the reader, and sustain a coherent line of reasoning (Hatipoğlu and Algı, 2018). In a second or foreign language, these demands intensify. L2 writers often have a narrower repertoire of metadiscourse markers and may be less confident in expressing nuance or calibrating engagement. Under such constraints, novice writers may fall back on familiar routines and transfer preferred patterns from L1 into L2 texts (Hatipoğlu and Algı, 2018).

Argumentative performance is shaped not only by individual linguistic resources but also by cultural and cross-linguistic genre conventions. Genres develop within educational and rhetorical traditions, and their typical expectations can differ across languages and cultures (Graham and Rijlaarsdam, 2016). At the same time, the degree of divergence appears to depend partly on how closely related the languages are (van Weijen et al., 2019). In practical terms, this means that conventions for stating claims, signalling certainty, and incorporating opposing views may not align across contexts, and multilingual writers must learn to adjust their argumentative choices to unfamiliar norms.

These issues become especially visible in source-based argumentative writing. Here, success depends on more than general writing ability and L2 proficiency. Students must acquire practices in at least two domains: argumentation and reasoning, and the use of sources as evidence, including selection, integration, evaluation, and attribution in ways that meet academic conventions (Ferretti and Fan, 2016; van Weijen et al., 2019; Arroyo González et al., 2020). The task therefore requires students to construct justified claims while also building an evidential chain that is transparent to the reader and defensible within scholarly norms.

Cross-language research further suggests that some aspects of writers’ argumentative behaviour may be relatively stable across languages. Van Weijen et al. (2019), for example, report that writers may reproduce similar surface features across tasks in different languages, including the use of titles or reference lists, which points to transfer across writing contexts. At the same time, composing in an L2 can prompt simplification in argumentative design. In L2 settings, writers may rely more heavily on a canonical structure that foregrounds source-based support for a single position while leaving limited space for counterarguments and rebuttals (van Weijen et al., 2019). Such patterns reduce dialogic depth and can weaken the appearance of engagement with alternative perspectives, a dimension often valued in academic argumentation.

For students whose first language is not English, an additional difficulty lies in expressing positions accurately and appropriately within English academic discourse (Amini Farsani et al., 2025). Together with cultural and rhetorical differences, this helps explain why reasoning and argument quality are frequently reported as problematic in L2 contexts. Cheong et al. (2021) emphasise that sustained, reasonable argumentation is demanding even in L1 and becomes more difficult in an L2, where limitations in linguistic resources can constrain reasoning performance. Empirical work likewise indicates that L2 academic writing often shows weaker reasoning than expected for higher education study (Cheong et al., 2021), and difficulties with argumentation are reported not only for L2 writers but also for many L1 writers, with L2 learners often facing persistent challenges across their programmes (Tankó and Csizér, 2018). These findings point to the need for instruction that goes beyond accuracy. It must address metadiscourse control, shifting genre expectations, cross-cultural rhetorical norms, and the reasoning and source-use practices on which academic argumentation depends.

Teaching Argumentative Writing

Argumentative competence is widely treated as a core condition of academic development because it enables students to articulate a position, evaluate claims, detect weak reasoning, and negotiate competing viewpoints through counterarguments and rebuttals (Cheong et al., 2021; Sánchez-Peña and Chapetón, 2018; Kaewneam, 2025). Written argumentation presupposes an intellectual exchange between writer and reader in which alternative perspectives are interpreted, weighed, and addressed through reasoned responses (Sánchez-Peña and Chapetón, 2018). Beyond academic performance, sustained work in this genre is associated with problem solving and independent critical thinking, capacities that support informed participation in social life (Hisgen et al., 2020; Akib et al., 2024). Argumentative writing can also strengthen students’ academic agency by requiring them to locate credible sources and to summarise and synthesise evidence in support of an explicitly justified stance (Thompson, 2021). For second language learners, this form of literacy often functions as a foundation, helping them align emerging academic skills with the argumentative practices of academic discourse communities (Amini Farsani et al., 2025).

At the same time, argumentative writing places heavy cognitive demands on learners because it requires coordination of language resources, reasoning, and topic knowledge. These demands become particularly salient in EFL contexts (Liu et al., 2023; Ekalia et al., 2025). When students lack awareness of what constitutes effective writing, the quality of their argumentative texts tends to deteriorate, even when they possess relevant ideas (Rubiaee et al., 2019). Novice writers often face additional constraints. They may enter higher education with limited guidance in argumentation, their prior educational experiences may not match university expectations, and many hesitate to take a clear position or rely on a one-sided understanding of argument that leaves little room for alternative perspectives (Kleemola et al., 2022). In empirical accounts, written argumentation is often described as slow to develop, with student drafts frequently exhibiting weak quality and difficulty in producing strong arguments (Allen et al., 2019). Recurrent shortcomings include weak evaluative standards, limited supporting proof, and underdeveloped engagement with counterarguments, which together point to the need for targeted instructional support (Ferretti and Lewis, 2019). More generally, younger or less experienced writers tend to find argumentative writing more challenging than narrative writing, consistent with the view that argumentation is an intellectually demanding problem (van Weijen et al., 2019; Ferretti and Fan, 2016).

Instructional expectations in academic argumentation centre on position taking supported by credible evidence. In many higher education writing tasks, students are required to adopt a stance and justify it through research drawn from reliable sources (Setyowati et al., 2017). Evidence use involves more than citation; it requires selecting information that can plausibly validate an argument and making its relevance explicit (Kibler and Hardigree, 2017). This is the point at which warranting becomes critical. Students must not only present data but also establish why that data is significant and sufficient for the claim at hand (Weyand et al., 2018). Source-based argumentative writing adds further complexity because writers must build a coherent argument from reading or listening materials while simultaneously analysing and evaluating content knowledge (Cheong et al., 2021). In addition, instruction must incorporate academic integrity and credibility. Learners need guidance in strengthening claims with authoritative sources and in treating citation practices as ethical scholarly behaviour rather than mechanical formatting (Uzun, 2024).

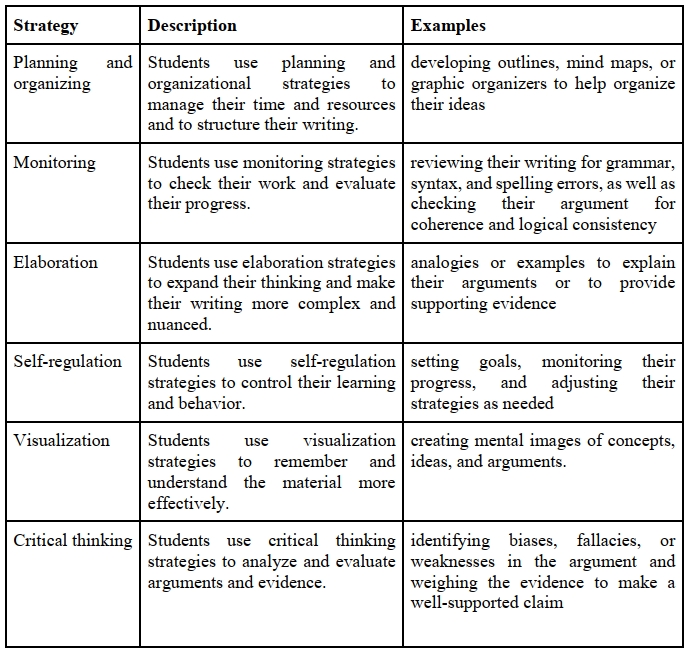

Learners’ difficulties are often visible in a recurrent set of problem areas. Students may struggle to formulate a defensible thesis, organise ideas coherently, provide sufficient and appropriate evidence, and address counterarguments with adequate reasoning. Difficulties also arise at the level of writing process, including planning, revision, time management, and control of mechanics (Almashour and Davies, 2023). In EFL research, cognitive challenges are frequently grouped into limited command of argument structure, weak critical thinking, and insufficient feedback conditions (Wang and Said, 2024). Against this background, strategy instruction is discussed as a practical mechanism for supporting task understanding, idea organisation, and development of coherent argumentation (Almashour and Davies, 2023). Table 4 summarises strategy categories that recur in the literature and illustrates how they translate into concrete classroom practices.

Table 4. Cognitive strategies for argumentative writing tasks[6]

The strategy categories in Table 4 clarify why effective teaching cannot be reduced to a generic template. Planning and monitoring support structural control and coherence, elaboration strengthens evidential development, and critical evaluation targets the quality of reasoning. In EFL settings, where cognitive load is typically higher, these supports become central rather than auxiliary (Liu et al., 2023; Wang and Said, 2024).

Teaching practices therefore need to be explicit, strategic, and responsive to students’ academic histories. Higher education students arrive with uneven exposure to argumentation, yet they are expected to construct arguments that take multiple positions into account (Robillos and Art-in, 2023). Research links effective instruction with explicit modelling and scaffolded practice, especially when the aim is to develop reasoning depth rather than formulaic responses. This also requires adaptive teaching expertise, namely flexible knowledge of strategies that can be adjusted to learners with different educational trajectories (Athanases et al., 2015; Newell et al., 2017). Counterargumentation is frequently underdeveloped, and several authors therefore recommend teaching counterargument explicitly so that students do not default to arguing against a source text without analytical engagement (Cheong et al., 2021). Instruction also benefits from direct work with warrants, backings, and coherent sequencing of argument components (Lesterhuis et al., 2018). In addition, familiarising students with relational claim types and practising support appropriate to those claim types may broaden rhetorical repertoires and lead to greater variation in essay structures (Tankó and Csizér, 2018).

Different orientations toward evidence use and warranting have been described as shaping instructional priorities. Weyand et al. (2018) distinguish three argumentative epistemologies. A structural approach treats argument construction as the application of rules that yield a predetermined structure (De La Paz et al., 2012, as cited in Weyand et al., 2018). An ideational approach frames evidence-based writing as a means of developing, organising, and presenting ideas (Langer and Applebee, 1987, as cited in Weyand et al., 2018). A social process approach emphasises purpose-driven argumentation situated in social contexts (Ivanič, 2004, as cited in Weyand et al., 2018). This distinction helps explain why teaching cannot be limited to naming “parts” of an argument. It must also address what arguments are for, how evidence functions in context, and how writers anticipate readers’ perspectives.

Tools and assessment practices can support teaching, although they may also narrow what is attended to if they are used as substitutes for instruction rather than as supports. Argument mapping is often presented as a useful aid in source-based tasks, particularly when students must work with diverse materials under time constraints. Mapping can help learners organise information, clarify perspective space, and make inferential relations explicit (Robillos and Art-in, 2023; Robillos and Thongpai, 2022; Davies et al., 2021). Automated writing evaluation is discussed in a similar enabling register. Content focused AWE feedback may support baseline knowledge about evidence use, including what constitutes a complete argument and how sources and reasons can be incorporated. At the same time, more advanced judgements about evidence relevance and persuasiveness appear to depend on collaborative work and guided interpretation rather than automation alone (Shi et al., 2022). Several authors therefore caution against evaluating written argumentation solely by counting argument elements, because such procedures can mask deficits in reasoning quality and responsiveness to alternative positions (Cheong et al., 2021). The complexity of argumentative reasoning and the difficulty of assessing it transparently may partly explain why argumentation remains underrepresented as a teaching tool in many academic writing handbooks and courses (Rapanta and Macagno, 2019).

Teaching argumentative writing therefore involves more than improving language accuracy or enforcing a standard form. It requires sustained support for responsible position taking, credible evidence use, explicit warranting, and engagement with alternative viewpoints through modelling, scaffolded practice, and feedback that targets both structure and reasoning depth (Cheong et al., 2021; Lesterhuis et al., 2018; Wang and Said, 2024). When these conditions are met, argument writing instruction can contribute not only to stronger academic performance but also to broader capacities for critical participation, including source evaluation and evidence-based judgement (Hisgen et al., 2020; Thompson, 2021).

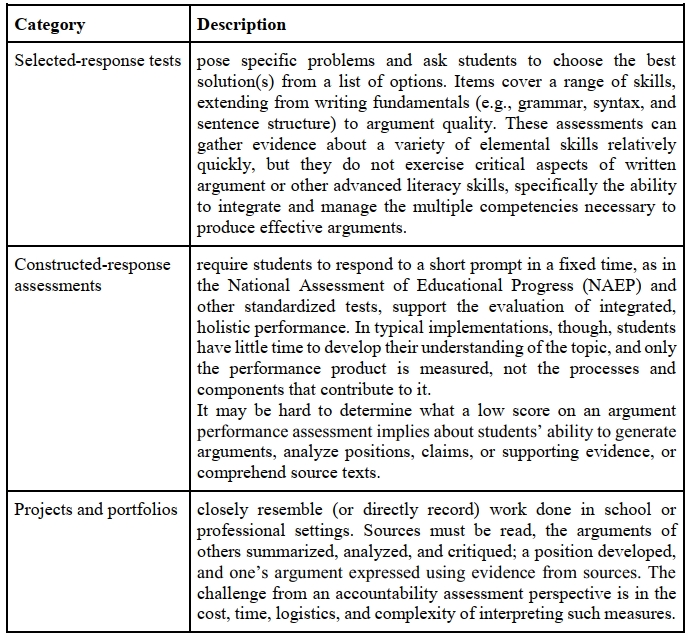

Assessment of Argumentative Writing Skills

Argumentative writing is among the most frequently used genres in classroom writing practice and formal writing assessment. In authentic academic settings, producing an argumentative text typically requires writers to evaluate, select, and use content knowledge from sources, rather than relying on personal opinion alone (Cheong et al., 2021). Persuasiveness therefore depends on whether claims are supported by an adequate evidential basis and on whether the text makes the reasoning that links evidence to claims explicit. For this reason, the assessment of argumentative writing cannot be reduced to general language accuracy. It must address evidence use and justificatory reasoning as core dimensions of performance (Paris et al., 2025).

In accountability settings, assessments of written argument are commonly organised into three broad formats: selected response tests, constructed response tasks, and projects or portfolios (Deane et al., 2018). These formats differ in the kind of evidence they provide about argumentative competence and in how confidently a score can be interpreted as reflecting students’ ability to produce extended argumentation. Table 5 summarises the three formats and the principal interpretive constraints associated with each.

Table 5. Assessments of written argument[7]

As Table 5 indicates, the three formats vary in what they can capture. Selected response tasks can efficiently sample knowledge about argument features, whereas constructed responses and portfolio-based work provide stronger evidence of how students coordinate claims, evidence, counterpositions, and rebuttals in extended writing. This distinction becomes consequential in high-stakes assessment contexts, where argumentative performance is used to support decisions about students’ readiness for academic work and progress within programmes (Zhang and Zhang, 2021).

Because argument quality is difficult to evaluate in a transparent and defensible way, particularly in foreign language settings, assessment practice depends on clearly specified criteria. In writing assessment, well-designed rubrics are therefore essential, because they articulate the criteria by which performance is judged and help separate language control from the quality of argumentation (Ozfidan and Mitchell, 2022; Paris et al., 2025). At the same time, rubric design makes visible a central difficulty in the field. Studies suggest that students may produce texts containing numerous formal argument elements while still demonstrating weak reasoning. Abdollahzadeh et al. (2017), for example, report that the frequency of argument components can be relatively high even when reasoning quality remains limited. This implies that assessment should not rely on counting elements alone, but should adopt integrative criteria that attend to relevance, justification, and engagement with alternative viewpoints as part of audience-oriented persuasion (Abdollahzadeh et al., 2017; Paris et al., 2025).

One response to this challenge is to combine structural and reasoning-focused criteria within analytic scoring. Stapleton and Wu (2015) propose the Analytic Scoring Rubric for Argumentative Writing, which integrates evaluation of structural components with judgement of reasoning quality. Rubrics of this kind are designed to distinguish the superficial presence of argumentative elements from deeper competence in justification, relevance, and responsiveness to counterpositions, thereby strengthening the interpretability of assessment outcomes (Stapleton and Wu, 2015; Abdollahzadeh et al., 2017).
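
The contrast between counting elements and analytic scoring can be illustrated with a minimal sketch. The element labels, the 0–3 reasoning scale, and the two sample ratings below are hypothetical values chosen for illustration only; they do not reproduce the categories, weights, or data of Stapleton and Wu (2015) or of any other study in the corpus.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical element labels and a 0-3 reasoning scale, chosen for illustration;
# they are not the categories or weights of any published rubric.
ELEMENTS = ("claim", "data", "counterargument", "rebuttal")


@dataclass
class EssayRating:
    element_counts: dict[str, int]  # how often each element type was identified
    reasoning: dict[str, int]       # rater's 0-3 judgement of reasoning quality per type


def count_score(rating: EssayRating) -> int:
    """Structure-only score: total number of argument elements found."""
    return sum(rating.element_counts.get(e, 0) for e in ELEMENTS)


def analytic_score(rating: EssayRating) -> int:
    """Credit an element type only if it is present, then score it by reasoning quality."""
    return sum(
        rating.reasoning.get(e, 0)
        for e in ELEMENTS
        if rating.element_counts.get(e, 0) > 0
    )


# Invented ratings. Essay A: many elements, weakly reasoned.
# Essay B: fewer elements, well reasoned.
essay_a = EssayRating(
    element_counts={"claim": 4, "data": 5, "counterargument": 2, "rebuttal": 1},
    reasoning={"claim": 1, "data": 1, "counterargument": 0, "rebuttal": 0},
)
essay_b = EssayRating(
    element_counts={"claim": 2, "data": 3, "counterargument": 1, "rebuttal": 1},
    reasoning={"claim": 3, "data": 3, "counterargument": 2, "rebuttal": 2},
)

for name, essay in (("A", essay_a), ("B", essay_b)):
    print(name, count_score(essay), analytic_score(essay))
```

With these invented ratings, Essay A outscores Essay B on the element count but not on the analytic score, which is the kind of divergence the cited studies caution about.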

Alongside human scoring, technology is increasingly discussed as a means of expanding feedback capacity. Automated systems can, in principle, support learners by providing argumentation-focused feedback that is less constrained by instructor availability and can be integrated into iterative revision cycles (Wambsganss et al., 2020). Such feedback becomes particularly relevant when instructional aims prioritise improvement in evidence use, justificatory reasoning, and engagement with counterarguments, which rubrics and classroom assessments are intended to capture.

Assessment is also closely tied to instruction. Writing programmes and writing-across-the-curriculum initiatives are encouraged to provide sustained opportunities for students to participate in argumentative practices in which they evaluate claims, justify positions, attend to contradictory views, and develop counterarguments and rebuttals (Abdollahzadeh et al., 2017). In this sense, assessment functions not only as measurement, but also as a mechanism that shapes what kinds of evidence-based reasoning students learn to produce in academic contexts.

Cluster 3. Models of Arguments

Argumentation has long been recognised as a central component of academic writing; however, it is not a unitary or universally defined construct. Across disciplines and research traditions, scholars have proposed diverse models of argument that emphasise different structural, rhetorical, cognitive, and social dimensions of argumentative practice (e.g., Toulmin, 1958; Rogers, 1961; Sánchez-Peña and Chapetón, 2018; Mateos et al., 2018; Musa, 2019). These models range from formal and logical representations of claims, evidence, and warrants to dialogic, genre-based, and socio-cognitive approaches that foreground audience engagement, stance construction, and contextual meaning-making (Stapleton and Wu, 2015; Zhang and Zhang, 2021; Geng et al., 2024). As a result, the field is characterised by conceptual plurality rather than theoretical consensus.

Within the mapped corpus, Toulmin-based approaches are most frequently operationalised explicitly, particularly in studies focused on assessment, analytic coding of argument structure, and technology-mediated writing support (Stapleton and Wu, 2015; Zainuddin and Shammem, 2016; Lu and Swatevacharkul, 2021). Classical rhetorical organisation is less often presented as an explicit model, yet it informs conventional essay structuring and is reflected in rubric-based instructional contexts (Zhang, 2016; Ozfidan and Mitchell, 2022; Zhang and Zhang, 2021). Rogerian-oriented principles appear mainly in studies adopting dialogic and sociocultural perspectives, especially where collaborative writing, perspective taking, and audience responsiveness are foregrounded (Musa, 2019; Hutasuhut et al., 2023; Najjemba and Cronje, 2020; De Castro Daza et al., 2022).

Models of argumentation are fundamental to argumentative writing because they offer structured representations of how reasoning is organised, communicated, and evaluated in academic discourse. By making explicit the components of argument and the relationships among claims, evidence, and justification, argument models enable systematic analysis of argumentative texts and provide analytical lenses for examining argument quality across contexts (Stapleton and Wu, 2015; Deane et al., 2019; McCarthy et al., 2022). From a research perspective, these models function as frameworks for describing and comparing argumentative performance across genres, disciplines, proficiency levels, and linguistic backgrounds (Abdollahzadeh et al., 2017; Cheong et al., 2021; van Weijen et al., 2019). In pedagogical contexts, argumentation models operate as cognitive and instructional scaffolds that support writers in externalising complex reasoning processes, anticipating alternative viewpoints, and aligning their texts with disciplinary expectations (Ferretti and Lewis, 2019; Luna et al., 2020; Lu and Swatevacharkul, 2021). Empirical studies further indicate that model-based instruction can enhance learners’ reasoning quality, evidence integration, and critical thinking in argumentative writing (Zainuddin and Shammem, 2016; Mateos et al., 2018; Kaewneam, 2025).

Importantly, different models foreground complementary dimensions of argumentative competence, including logical coherence, rhetorical persuasion, dialogic engagement, and epistemic stance (Rapanta and Macagno, 2019; Yasuda, 2023; Papangkorn and Phoocharoensil, 2021). Argument models also mediate between theory and practice by informing assessment frameworks, rubric development, and feedback practices, thereby promoting transparency and consistency in evaluating academic arguments (Ozfidan and Mitchell, 2022; Paris et al., 2025; Lesterhuis et al., 2018). In digital and AI-mediated learning environments, these models increasingly guide the design of adaptive feedback systems, argument mapping tools, and chatbot-based scaffolding for argumentative writing (Benetos and Bétrancourt, 2020; Guo et al., 2022; Su et al., 2023; Wambsganss et al., 2020). Consequently, the study and integration of argumentation models are not optional but essential for advancing both theoretical understanding and pedagogical practice in argumentative writing within higher education.

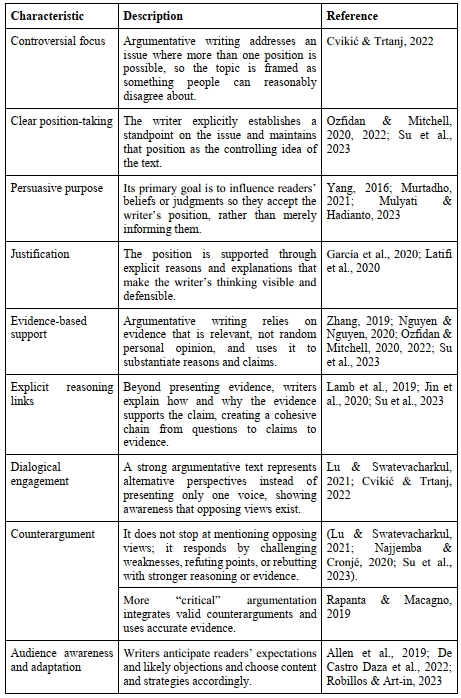

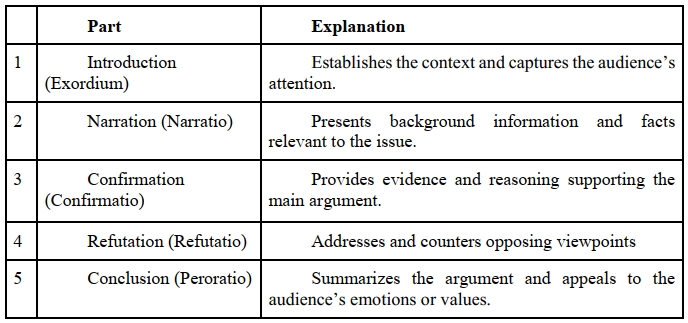

The Classical (Aristotelian) Argument Model

The Classical Argument Model originates in classical rhetoric, most notably in the works of Aristotle and Cicero, and represents one of the earliest systematic frameworks for persuasive communication (Lawson-Tancred, 1992). It conceptualises argumentation as a structured, goal-oriented process aimed at persuading an audience through logical coherence, clarity of reasoning, and effective organisation. The classical argument structure is a persuasive writing format that consists of five main parts: introduction, narration, confirmation, refutation, and conclusion (Table 6). Owing to its emphasis on orderly reasoning and persuasive effectiveness, this model has been widely applied in philosophy, rhetoric, law, and academic and persuasive writing.

Table 6. Classical argument model[8]

In the contemporary research corpus on academic argumentative writing, however, the Classical model is not explicitly foregrounded as an analytical or pedagogical framework. Instead, it functions primarily as a theoretical background tradition that informs later models of argumentation (Zhang, 2016; Rapanta and Macagno, 2019). None of the reviewed empirical studies adopts Aristotelian categories such as ethos, pathos, and logos as formal coding schemes or assessment criteria. Rather, classical principles surface indirectly in discussions of claims, evidence, persuasion, audience awareness, and logical coherence.

Several studies implicitly draw on Aristotelian reasoning without naming the model directly. For example, Abdollahzadeh et al. (2017) analyse argumentative writing behaviour through claims, reasons, and evidence. Although their analytical framework is largely Toulmin-inspired, the underlying concern with rational persuasion and coherent reasoning reflects classical rhetorical logic. Similarly, Stapleton and Wu (2015) distinguish between surface textual features and the substantive quality of arguments, foregrounding logos-driven persuasion and argumentative substance (central concerns of Aristotelian rhetoric). Foundational overviews of argumentative writing by Ferretti and Fan (2016) and Ferretti and Lewis (2019) situate modern instructional and cognitive approaches within a broader rhetorical tradition, explicitly acknowledging classical rhetoric as the historical foundation upon which contemporary models are built.

Overall, the Classical Argument Model occupies a historical and conceptual role within the corpus rather than serving as an active methodological choice. Its influence is mediated through later frameworks (most notably Toulmin’s model), which offer greater analytical explicitness and pedagogical operability. This pattern reflects a broader disciplinary shift in academic writing research from rhetorically general models toward analytically precise and empirically tractable approaches, while still retaining classical rhetoric as an enduring intellectual foundation for understanding persuasion, reasoning, and audience engagement in academic argumentation.

Critical Literacy Argument

Sánchez-Peña and Chapetón (2018) do not propose a formal, text-analytic classification of arguments (e.g., Toulminian or Aristotelian). Instead, they advance a critical-dialogic re-conceptualisation of argumentation, in which arguments are classified implicitly through their social function, ethical orientation, and dialogic purpose, rather than through structural components (Table 7). Their classification of arguments emerges qualitatively from learners’ writing practices within a critical literacy framework. Thus, argument types are characterised by how participants construct and support claims as well as the discursive means they use to make their arguments persuasive and contextually grounded.

Table 7. Critical-dialogic argument classification[9]

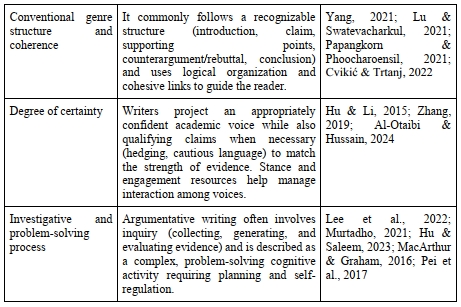

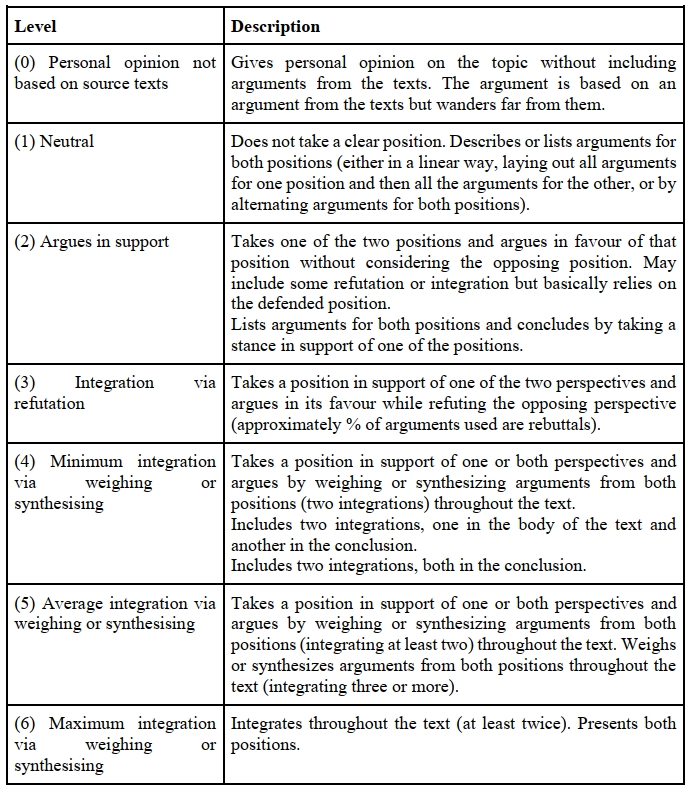

Classification Based on the Level of Argument Integration

To operationalise argumentative writing quality, some studies use classifications that focus on how writers integrate perspectives and information drawn from multiple sources. A widely cited example is an integration-level classification. Mateos et al. (2018) describe argument quality as a continuum of epistemic integration, capturing how writers incorporate, relate, and transform potentially conflicting information from sources. In this model, higher levels correspond to more coordinated perspective management and more advanced knowledge construction, providing an analytic basis for distinguishing superficial juxtaposition from integrative synthesis (Table 8).

Table 8. Argument classification based on its level of integration[10]

Although integration scales make the construct measurable, they also demonstrate why “argument quality” is not necessarily comparable across studies. For example, a text may score highly on integration but remain weak on evidential justification, or vice versa, depending on the criteria adopted.

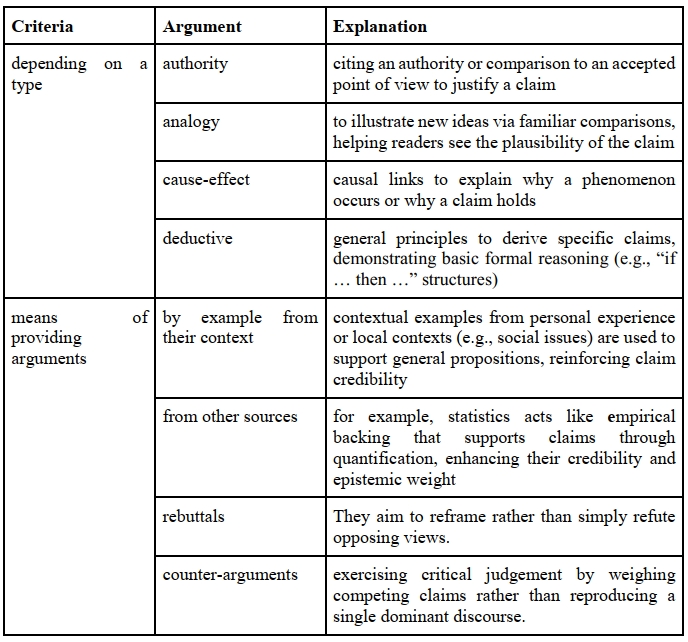

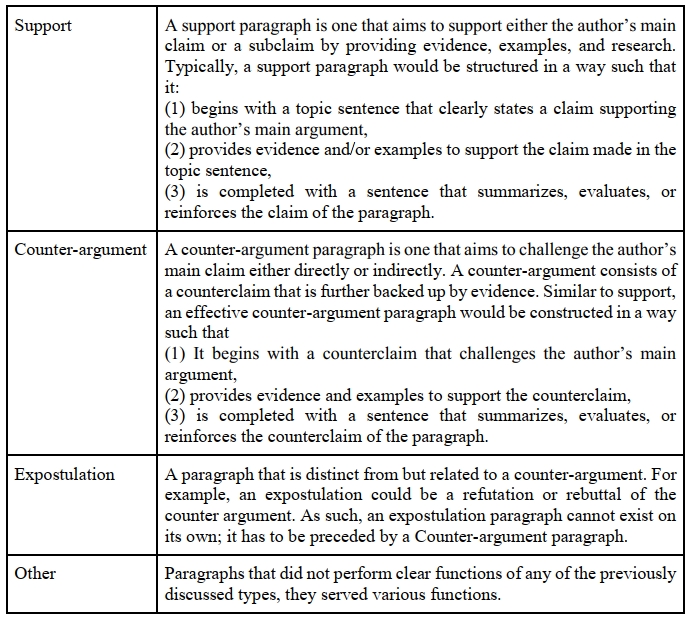

Functional Classification of Argument

In addition to component-based and integration-based approaches, the corpus includes functional classifications that treat argumentation as a distribution of discourse roles across extended text units. McCarthy et al. (2022), for example, operationalise argument structure by identifying paragraph functions such as support, counter-argument, and related moves (Table 9). This perspective is designed for corpus-scale analysis and foregrounds how argumentative work is staged across a text rather than how individual claims are diagrammed.

This classification differs from Classical and Toulmin-based frameworks in that it does not decompose arguments into components such as claims, warrants, and rebuttals. Instead, it operationalises argumentation at the level of extended discourse units by assigning functional roles to paragraphs or segments. This approach supports systematic comparison of linguistic and rhetorical features across supportive and counter-argumentative discourse within student texts (Table 9).

Table 9. Functional argument classification[11]

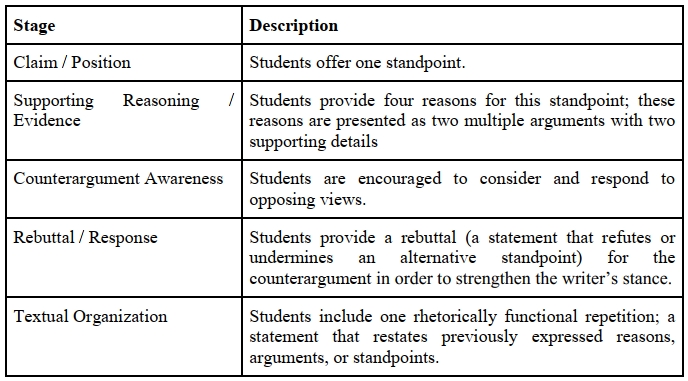

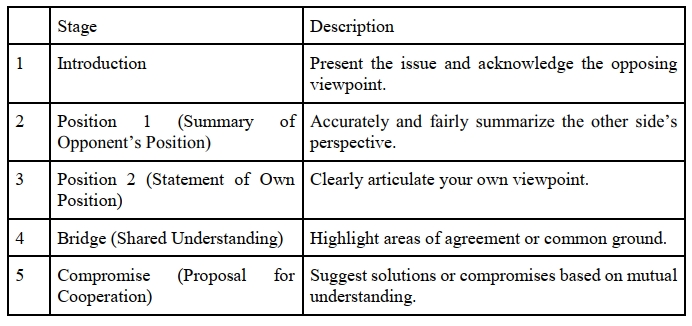

Operational Structure of Arguments

Some studies define argument structure through pedagogical enactment rather than formal typology. Musa (2019), for instance, specifies an operational structure that connects argumentative elements to staged instructional activities and expected textual outcomes (Table 10). In this formulation, formal components of argument are coupled with dialogic competencies, including explicit attention to counterargument and rebuttal.